PTP/designs/rm framework

Overview

This page describes the new design for the PTP resource manager (RM) monitoring/control framework. The primary motivation for a new framework is that the existing RM infrastructure (both model and UI) will not scale and is not flexible enough to encompass all machines that PTP wishes to target.

The purpose of this framework is to:

- Collect and display monitoring information relating to the operation of a target system in a scalable manner

- Provide job submission, termination, and other job-related operations

- Support debugger launch and attach

- Enable the collection and display of stdout from, and the transmission of stdin to, running jobs (where supported by the target system)

Monitoring information will comprise:

- The status and position of the user's jobs in queues

- Job attribute information

- Target system status and health information for arbitrary configurations

- The physical/logical location of jobs on the target system

- Predictive information about job execution

Key attributes of the framework include:

- Support for arbitrary system configurations

- Support for all existing resource managers

- The ability to scale to petascale system sizes and beyond

- Support for both user-installable and system-installable modes of operation

- Automated installation for user-installable operation

- Simple addition of support for new resource managers

- No need for compiled proxy agents

Rationale

The existing RM design is documented in the PTP 2.x Design Document. The main issues with the existing RM design fall into the following areas:

- Model scalability and flexibility

- UI scalability

- Complexity of adding new RM support

Model Scalability and Flexibility

PTP employs an MVC architecture for monitoring job and system status. The model is used to represent the target system and the jobs that are running on that system. The model receives updates from the proxy agents running on the target system.

Currently, the model provides a fixed hierarchy in which machines consist of nodes, and (resource manager) queues contain jobs. A job has one or more processes, which are running on specific nodes.

One problem with this approach is that the model hierarchy is inflexible and cannot easily represent more complex architectures (e.g. BG). Although it is possible to map such an architecture onto machines/nodes, the user may wish to see the actual physical layout of the machine. Also, machines often have both physical and logical layouts, each of which should be visible to the user.

Another issue is that the model currently represents the entire system down to the individual process level. This is clearly going to have scaling issues with node/core counts in the hundreds of thousands and process counts in the millions.

The model is really only required for visualizing the system and job status on a target system. A better approach is to have a model that is tailored for this visualization, and that only models the currently visible aspects of the system.

UI Scalability

UI scalability is also a significant concern if PTP is to support petascale (and beyond) systems. The current runtime views display the model using individual icons to represent machines, nodes, jobs, etc. This has been recognized as a potential scaling issue for some time, and although some optimizations have been made, recent scaling tests have shown that the UI will be a major issue in achieving effective scaling.

Scaling the UI is going to require a combination of a compact representation for displaying system status and a drill-down approach for visualizing more detailed information. In addition, it should be possible to provide different views of the same system, such as a physical and a logical view. Finally, the UI should continue to link job information with system information in a way that is meaningful to the user.
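The combination of a compact overview and drill-down can be illustrated with a minimal sketch. The record layout (rack, node, state) and the function names are assumptions made for illustration only; they are not PTP or LLView types.

```python
from collections import Counter

# Hypothetical node records: (rack id, node id, state).
nodes = [
    ("rack0", "n0", "busy"),
    ("rack0", "n1", "idle"),
    ("rack1", "n2", "busy"),
    ("rack1", "n3", "down"),
]


def summarize(records):
    """Compact view: one state histogram per rack instead of one icon per node."""
    summary = {}
    for rack, _node, state in records:
        summary.setdefault(rack, Counter())[state] += 1
    return summary


def drill_down(records, rack):
    """Detailed view: per-node states, but only for the rack the user selected."""
    return {node: state for r, node, state in records if r == rack}


overview = summarize(nodes)          # what the top-level view would display
detail = drill_down(nodes, "rack1")  # requested only when the user expands rack1
```

The point of the split is that the amount of data shown at the top level grows with the number of racks rather than the number of nodes or processes.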

New RM Support

Adding support for a new RM is currently a fairly significant undertaking, requiring the development of a proxy agent that interacts with the job scheduler via commands or APIs. The agent must convert the system-specific information into a protocol using a low-level protocol API. On the client side, additional work is required to provide a launch configuration UI that will allow the user to select the parameters/attributes for the launch.

An approach that simplifies this process is highly desirable, as it would enable PTP to support a broader range of systems, and hopefully expand adoption. The recently developed PBS RM has some features that help this process (much of the RM is job scheduler neutral), but the effort required is still significant. The goal here is to be able to add a new RM without any coding (e.g. via an XML description), or at least minimize the coding to a very small component.

Design

The RM framework is separated into control and monitoring components. Control and monitoring operations are independent and can operate without requiring interaction between the components.

A detailed guide to the XML Schema for the new Configurable Resource Manager, along with an introductory tutorial slide-set demonstrating some simple modifications to an existing XML definition, is now available at PTP/Resource_Manager_Configuration.

RM Control

Control operations manage the submission of, and interaction with, user-initiated jobs. They consist of:

- Job attribute discovery

- Job submission

- Job-related commands (e.g. termination)

- Debug session launch

- Stdin/stdout forwarding

Control using current proxy protocol

The control operations are not affected by the scaling problems described above. Existing RMs can therefore keep the current proxy-protocol-based implementation for their control operations. To make new RMs easy to develop, it is important that the control part of a new RM can be added using only a few scripts and XML files.

RM Monitoring

The monitoring operations consist of:

- System configuration discovery

- System and job status change notification

- View change notification

Monitoring based on LLView

The RM monitoring could be largely based on LLView. LLView has scalable views and a back-end written in Perl which supports PBS and LoadLeveler and appears to be easily extensible to other RMs (including in languages other than Perl). The scaling problem in data communication is addressed by communicating information only up to a level of detail that does not produce too much data.

Things currently not supported

- Requesting additional information for specific racks/nodes/processes/jobs/...

- Sending only the changes since the last update (currently the whole model is sent on each update)

- Getting more frequent updates for some information. Currently all information is updated at the same interval, but the state of the user's own jobs might be required more frequently.
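To make the second item concrete, the following is a minimal sketch of what sending only the changes could look like if the model were exchanged as a flat key/value snapshot. This is an illustration under that assumption, not a description of LLView's protocol; the function names and snapshot keys are hypothetical.

```python
def diff_snapshot(previous, current):
    """Return only the entries that changed or disappeared since `previous`."""
    changed = {
        key: value
        for key, value in current.items()
        if key not in previous or previous[key] != value
    }
    removed = [key for key in previous if key not in current]
    return {"changed": changed, "removed": removed}


def apply_delta(previous, delta):
    """Rebuild the current snapshot on the client from the old one plus a delta."""
    merged = dict(previous)
    merged.update(delta["changed"])
    for key in delta["removed"]:
        merged.pop(key, None)
    return merged


old = {"job1": "queued", "job2": "running", "node7": "idle"}
new = {"job1": "running", "node7": "idle", "job3": "queued"}
delta = diff_snapshot(old, new)
```

Here the unchanged entry `node7` is not part of the delta, so the update size depends on how much changed rather than on the size of the system.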

Some other considerations:

- Feasibility of automated deployment of the user (vs. sysadmin) version of LLView

- The role (if any) of the (Java) proxy in mediating between LLView and the client

A description of the LLview data access tool llview_da, and some remarks about integrating it into PTP, can be found on the page LLview data access tool.

Remarks on Control Design and its Relation to Monitoring

While it will be necessary to maintain backward compatibility with the current Resource Managers, at least through a transition period, we will eventually want to port all existing managers to the new design. The following remarks focus specifically on what the control part of the new RM design may look like for non-debugging jobs (the discussion of how to manage a debug job is for the moment deferred).

Model Definition

First, the decision to adopt LLView for the monitoring part of the RM functionality has the following consequences:

- The "machine/queue" part of the current model (see Runtime Platform) will be replaced by LLView's XML scheme and protocols; see New Model Structure for LML integration;

- Support for monitoring a new DRM will involve adding the necessary modules to LLView, in addition to any PTP-specific configuration;

- Support for launch and cancellation can in theory now be separated cleanly from the monitoring and will constitute a distinct part of the Resource Manager description (some connection, however, must exist between job status and the control commands, particularly to cancel a job; more of this below).

This leaves the question of job attribute definition. Elsewhere on these pages it is stated that:

PTP takes the approach that the user should be spared from needing to know about specific details of how to interact with a remote computer system. That is, the user should only need to supply enough information to establish a connection to a remote machine (typically the name of the system, and a username and password), then everything else should be taken care of automatically. Details such as the protocol used for communication, how files are accessed, or commands initiated, do not need to be exposed to the user.

While this desideratum is reasonable, it is unfortunately difficult to realize with respect to the actual configuration and launching of jobs through batch systems, since there are often no mechanisms for dynamic discovery of information about their configuration, subject as that is to the vagaries of system administration. Grid interfaces for this kind of discovery do not guarantee that such mechanisms or protocols will indeed be implemented at any given site.

The current event-driven model for definition of job attributes is to this extent redundant, especially in light of the fact that a proxy such as the one for PBS must be told about these attributes from a configuration file, and that file in turn needs to be built up in terms of the specifics of the PBS system on that resource (by no means entirely standardized). Hence, the user is going to have to know something about this machine configuration in advance in order to choose (or create) the proper PTP RM configuration file.

Some sites and user communities solve this problem by providing shared configuration information, often through a common repository or database. It makes a good bit of sense for PTP to adopt this point of view; that is, assume that the user needs to select (if not actually customize) the proper configuration for the resource. Standard examples could be provided for the various resource managers/DRM systems in the PTP distribution, and if the user is accessing a system where LLView is installed in administrative mode, then perhaps that configuration would be made available from an agreed-upon URL in connection with that installation. PTP could also provide for opening such configuration files in an XML editor, or perhaps one or more wizards to aid in their manipulation.

Resource Manager XML Configuration

The proposed annotated XSD for Resource Manager configuration is now available at PTP/designs/resource_manager_xsd.

If the goal is to provide a single XML file for configuring any given Resource Manager type/instance, this description would need to be a superset of the LLView schema for monitoring (see above). Configuration of the LLView client would then depend on extracting the node of the XML tree corresponding to the LML for this batch system.
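As a rough sketch of extracting the monitoring node from a combined configuration: the element names below (`resource-manager`, `control`, `monitor`, `layout`) are invented for illustration and are not taken from the actual XSD or from LML.

```python
import xml.etree.ElementTree as ET

# Hypothetical combined configuration; element names are assumptions.
CONFIG = """
<resource-manager name="example-pbs">
  <control>
    <submit-command>qsub</submit-command>
  </control>
  <monitor>
    <layout view="physical"/>
  </monitor>
</resource-manager>
"""


def extract_monitor_config(xml_text):
    """Return the monitoring subtree as a standalone XML string."""
    root = ET.fromstring(xml_text)
    monitor = root.find("monitor")
    if monitor is None:
        raise ValueError("configuration has no <monitor> section")
    return ET.tostring(monitor, encoding="unicode")


# The string that would be handed to the monitoring client.
monitor_xml = extract_monitor_config(CONFIG)
```

The control part of the file is left untouched, so the two halves of the configuration can evolve independently.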

The control part of the configuration would involve a description of the valid job attributes and other settings specific to the building of a valid launch command. These attributes would be typed and could serve to build the UI tab. The user should be able to choose what subset of these attributes will be displayed, and this should be modifiable within the launch tab without having to reconfigure the Resource Manager itself.

It may be desirable to generalize the internal representation of these attributes such that the attributes associated with a given scheduler or batch system are mapped to it; it may further be useful to adopt a standardized or existing schema for this purpose. Two such schemas we may want to consider:

- DRMAA. See www.drmaa.org; see also the reference implementation by Sun's Grid Engine;

- SSS Job. See Cluster Resources Javadocs and Job Object Specification.

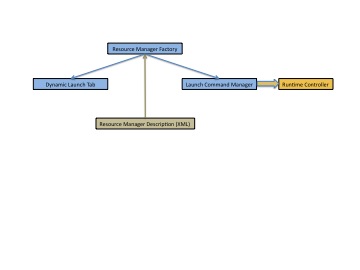

In order to realize the goal of adding a new Resource Manager with little or no additional coding, something like the following abstraction will be necessary (refer also to Normal Launch):

The factory would be responsible for instantiating two classes from the XML configuration. One is the concrete Launch Tab for display in the Run Configuration. The other is a module, the Launch Command Manager, either composed into or called by the Runtime Controller, which would be responsible for converting the Launch Tab information into the correct command flags and for writing out or staging any necessary files (such as the actual batch script) before the submit command is called. The Launch Command Manager would also implement the necessary cancellation command construction.

The idea here would be to provide a shell class for both the Launch Tab and the Launch Command Manager, which would accept one or more beans constructed from the XML (using, for instance, JAXP). The mapping from attribute name to flag or variable would be entirely described by the XML, which would also need to indicate whether or not there is a batch script to stage.
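The attribute-to-flag mapping can be sketched as follows. The XML layout (a `control` element with `submit`/`cancel` attributes and typed `attribute` children carrying a `flag` and an optional `template`) is purely hypothetical and does not reflect the proposed XSD; `qsub` and `qdel` with `-q`, `-N`, and `-l` are used only as a familiar PBS example.

```python
import xml.etree.ElementTree as ET

# Hypothetical control description; not the actual PTP schema.
CONTROL_XML = """
<control submit="qsub" cancel="qdel">
  <attribute name="queue" type="string" flag="-q"/>
  <attribute name="job_name" type="string" flag="-N"/>
  <attribute name="node_count" type="integer" flag="-l" template="nodes={value}"/>
</control>
"""

# Typed attributes: values coming from the launch tab are validated by casting.
CASTS = {"string": str, "integer": int}


def build_submit_command(control_xml, values, script):
    """Turn launch-tab values into an argument list, driven only by the XML."""
    control = ET.fromstring(control_xml)
    command = [control.get("submit")]
    for attr in control.findall("attribute"):
        name = attr.get("name")
        if name not in values:
            continue  # attribute not set by the user: emit no flag
        value = CASTS[attr.get("type")](values[name])
        template = attr.get("template", "{value}")
        command += [attr.get("flag"), template.format(value=value)]
    command.append(script)
    return command


def build_cancel_command(control_xml, batch_id):
    """Cancellation is described by the same XML as submission."""
    control = ET.fromstring(control_xml)
    return [control.get("cancel"), batch_id]


submit = build_submit_command(
    CONTROL_XML, {"queue": "debug", "node_count": "4"}, "job.sh"
)
```

Under these assumptions, supporting another scheduler would mean supplying a different XML description rather than writing new code.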

The advantage of using a standard such as DRMAA or SSS Job is that it will be unlikely that we will need to modify the structure of the XML schema when we encounter a new DRM.

The Role of the Proxy

Since the brunt of the work currently done by the server-side (remote) proxy will be taken over by the LLView server, it remains to be seen whether we need a separate control proxy or not.

Advantages

- In the abstract, a proxy seems more scalable with respect to client interaction in the case of multiple jobs launched to the same resource; in particular:

  - fewer open connections need to be maintained;

  - the forwarding of stdout/stderr events could be batched;

- There would be no need to stage a batch script (could be created by the proxy).

Disadvantages

- In terms of the HPC system, this solution would appear less scalable, inasmuch as every user would be launching a proxy, which could cause load problems on the login nodes of a system;

- In the case of batch (rather than interactive) mode, a persistent proxy seems unnecessary at least from the standpoint of the submit command, which is largely "fire and forget" (see below for stdout/stderr capture and job id mapping in this case).

The absence of a proxy would greatly enhance the maintainability of the code; a proxy, furthermore, is not strictly necessary for the provisioning of stdout/stderr to the user or for solving the mapping of the job id to the client's view of the job.

Managing Stdout/Stderr

In the case of an interactive launch, stdout/stderr can be captured directly across the remote connection.

In the case of a batch launch, the client could simply capture the stdout/stderr file paths given in the job description and, when the status changes to running, let the user connect to that file through a command/action.

- Caveat: the user needs to set the paths to somewhere on the login/head nodes, as most HPC systems do not allow inbound connections to the compute nodes.
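A minimal sketch of "connecting" to such an output file is to poll it and read whatever has been appended since the last read. A local file stands in here for the file on the login/head node; in practice the read would go through the remote connection, and the function name is hypothetical.

```python
def read_new_output(path, offset):
    """Return (text appended since `offset`, new offset) for a job output file."""
    with open(path, "rb") as handle:
        handle.seek(offset)
        data = handle.read()
    # Offsets are tracked in bytes so that repeated polls never re-read output.
    return data.decode("utf-8", errors="replace"), offset + len(data)
```

A client would call this periodically once the job status is running, keeping the returned offset between calls.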

Mapping Job Id to Client Id

Currently, the client provides a randomized id to the proxy, and the proxy is responsible for telling it (via an update event) what that corresponds to in terms of the scheduler.

The PBS Java proxy currently does this by parsing the stdout of qsub; all Java proxies, in fact, will be reliant on the shell/script commands for submission to return this id. This is a weakness with the non-native implementation, which cannot take advantage of an internal API call to the scheduler to obtain this information. But given that such a compromise must be made when using a Java proxy, it does not seem unreasonable to expect that the same information (i.e., the batch id) be obtained from an SSH-initiated invocation of the submit command. The XML configuration could contain instructions (perhaps a regular expression) for how to parse the stdout to obtain the batch id. The client itself could then map its internal id to that id.
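The parsing and mapping step could look roughly like the sketch below. The regular expression assumes a PBS-style identifier of the form `<number>.<host>` printed on a line of its own; both the pattern and the sample output are illustrative assumptions, and in the proposed design the pattern would come from the XML configuration rather than being hard-coded.

```python
import re

# Hypothetical pattern, as it might be supplied by the XML configuration.
JOB_ID_PATTERN = r"^(?P<id>\d+\.\S+)\s*$"


def parse_batch_id(stdout, pattern):
    """Extract the scheduler's batch id from the submit command's stdout."""
    match = re.search(pattern, stdout, re.MULTILINE)
    return match.group("id") if match else None


class JobIdMap:
    """Client-side mapping from the client's internal id to the batch id."""

    def __init__(self):
        self._batch_ids = {}

    def register(self, client_id, stdout, pattern):
        batch_id = parse_batch_id(stdout, pattern)
        if batch_id is None:
            raise ValueError("no batch id found in submit output")
        self._batch_ids[client_id] = batch_id
        return batch_id

    def batch_id(self, client_id):
        return self._batch_ids[client_id]


jobs = JobIdMap()
jobs.register("client-42", "12345.headnode.example.org\n", JOB_ID_PATTERN)
```

The batch id stored this way is also what a later cancellation command would need.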

Outstanding Issues

If we can agree upon the approach outlined above, the next step will be to come up with a preliminary design (API) for the control part of the Resource Manager.

- Another issue we may want to address first: should each RM have a batch/interactive mode switch, with some (e.g., OpenMPI) disabling one of the two? Or should there be, for instance, PBS-batch and PBS-interactive RMs as separate implementations (with separate config files, etc.)?

Existing Resource Managers

PE and LoadLeveler

The existing PE resource manager and LoadLeveler resource manager rely on their proxies to provide attribute information to build the resource tab in the run configuration dialog. These resource managers are described at PTP/designs/current_PE.

OpenMPI

The existing OpenMPI resource manager sends commands directly to the remote system via an ssh connection. This resource manager is described at PTP/designs/current_OMPI.