
MaturityAssessmentReports/20140523

This is a quick report of the discussions held between Gaël Blondelle and Boris Baldassari on the 23rd of May 2014.

Introduction

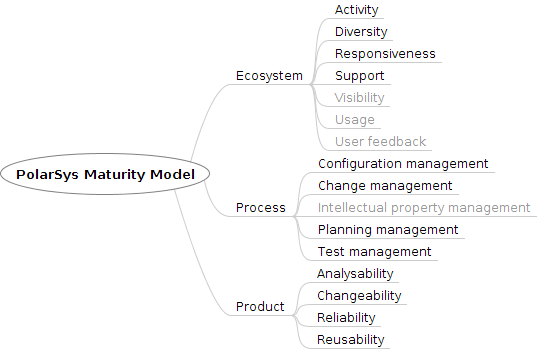

During the last iteration, the Maturity Assessment group identified a number of quality requirements, both for Eclipse and PolarSys, and from there the group agreed on a quality model to assess maturity. This model defines three major components (Product, Process, Community), each of which is composed of various quality attributes (Reliability, Maintainability, Activity, Support, and so on).

The next step is to develop this quality model and communicate its features so people and projects adopt it on a large scale.

To achieve this, we will mainly rely on Bitergia's excellent dashboard and build on the data it already provides to compute and present the corresponding values in the quality model.

Prototype Goals

The objective of the first iteration is to produce a proof-of-concept prototype with the following features:

- Visualisation of the PolarSys quality model definition

- Decomposition into quality attributes

- Decomposition of quality attributes into computable metrics

- Visualisation of a static version of the quality model implemented for a specific project
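The decomposition listed above can be sketched as a small tree. This is only an illustration in Python, not the group's actual model definition: the three components and four of the attribute names come from the introduction, while the assignment of attributes to components, the `Predictability` attribute, and every metric identifier (`bug_density`, `commits_per_month`, and so on) are hypothetical placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One node of the quality model: a component, an attribute, or a metric."""
    name: str
    children: list["Node"] = field(default_factory=list)

    def leaves(self) -> list[str]:
        """Return the names of all leaf nodes, i.e. the computable metrics."""
        if not self.children:
            return [self.name]
        return [leaf for child in self.children for leaf in child.leaves()]


# Hypothetical model: attribute placement and metric names are assumptions.
model = Node("Maturity", [
    Node("Product", [
        Node("Reliability", [Node("bug_density")]),
        Node("Maintainability", [Node("rule_violations")]),
    ]),
    Node("Process", [
        Node("Predictability", [Node("release_frequency")]),
    ]),
    Node("Community", [
        Node("Activity", [Node("commits_per_month")]),
        Node("Support", [Node("mailing_list_replies")]),
    ]),
])

# The leaves are the metrics that a toolchain would have to collect.
print(model.leaves())
```

Keeping components, attributes, and metrics in one recursive type means the same traversal can serve both the visualisation of the definition and the visualisation of a project's results.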

Goals for further iterations will include:

- Integration with Bitergia as a data source

- Integration of quality assessment results in the Bitergia dashboard

- Customization of criteria weights for PolarSys members
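One possible way to support customizable criteria weights is a weighted mean over component scores. The sketch below is an assumption about how this could work, not a decision recorded in the meeting: the function name `weighted_score`, the default weight of 1.0, and the sample scores are all hypothetical.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Return the weighted mean of the scores.

    Criteria without an explicit weight default to 1.0 (an assumption).
    """
    total_weight = sum(weights.get(name, 1.0) for name in scores)
    if total_weight == 0:
        raise ValueError("at least one weight must be non-zero")
    weighted_sum = sum(value * weights.get(name, 1.0) for name, value in scores.items())
    return weighted_sum / total_weight


# Hypothetical component scores for one project.
scores = {"Product": 4.0, "Process": 3.0, "Community": 2.0}

default_result = weighted_score(scores, {})                 # all weights equal
custom_result = weighted_score(scores, {"Community": 2.0})  # a member who values Community more

print(default_result, custom_result)
```

With this shape, a PolarSys member would only need to supply the weights that differ from the defaults.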

Roadmap

- First, we need to take a second look at the quality model and perhaps improve it by adding or removing quality attributes, or by modifying how quality attributes are computed from the metrics.

- Confirm the common agreement on this quality model with the PolarSys group.

- Establish a list of the metrics needed and where they can be gathered from. Data providers are Bitergia's dashboard (for all SCM, communication, and ticket data), Sonar, and the rule-checking tools: FindBugs, Checkstyle, and PMD. Some information may need manual reporting as well (e.g. deployments).

- Develop tools -- The tools prototype is available on GitHub here.

- Set up a visualisation of the quality model structure. This will be used to communicate about our work and help people understand how we define quality.

- Set up a toolchain to compute quality attributes from the metrics established in the first step.

- Build a visual presentation of the quality model results, as computed for a sample project.

- Evangelise

- Help projects set up their maturity assessment process.

Notes:

- Gather data from Bitergia's dashboard, Sonar, and rule-checking tools.

- Visualization of the quality model structure and of each project's assessment with D3.js.

- Data files will be stored in JSON format.
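As a rough illustration of the last two notes, a quality model could be written out as nested JSON objects with `name` and `children` keys, which is the shape that D3's hierarchy helpers read by default. The file layout below is an assumption, not an agreed format, and the placement of attributes under components is hypothetical.

```python
import json


def to_d3_hierarchy(name: str, subtree: dict) -> dict:
    """Convert a nested dict into {"name": ..., "children": [...]} nodes."""
    if not subtree:
        return {"name": name}  # leaf node: no "children" key
    return {
        "name": name,
        "children": [to_d3_hierarchy(key, value) for key, value in subtree.items()],
    }


# Hypothetical structure; only the component and attribute names come from the report.
model = {
    "Product": {"Reliability": {}, "Maintainability": {}},
    "Process": {},
    "Community": {"Activity": {}, "Support": {}},
}

tree = to_d3_hierarchy("Maturity", model)
text = json.dumps(tree, indent=2)  # this string is what would be stored in a data file
print(text)
```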

Data retrieval

Data can be retrieved from Bitergia's dashboard as JSON:

- evolutionary:

- all projects scm (commits) evolutionary: http://dashboard.eclipse.org/data/json/scm-evolutionary.json

- all projects its (bug tracking system) evolutionary: http://dashboard.eclipse.org/data/json/its-evolutionary.json

- all projects mls (mailing lists) evolutionary: http://dashboard.eclipse.org/data/json/mls-evolutionary.json

- all projects scr (code reviews) evolutionary: http://dashboard.eclipse.org/data/json/scr-evolutionary.json

- static:

- all projects scm (commits) static: http://dashboard.eclipse.org/data/json/scm-static.json

- all projects its (bug tracking system) static: http://dashboard.eclipse.org/data/json/its-static.json

- all projects mls (mailing lists) static: http://dashboard.eclipse.org/data/json/mls-static.json

- all projects scr (code reviews) static: http://dashboard.eclipse.org/data/json/scr-static.json
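The URLs above follow a regular `<source>-<kind>.json` pattern, so a retrieval helper could look like the following sketch. It uses only the Python standard library; the function names `data_url` and `fetch` are hypothetical, and nothing is assumed here about the structure of the returned JSON, which this report does not describe.

```python
import json
from urllib.request import urlopen

BASE_URL = "http://dashboard.eclipse.org/data/json"
SOURCES = ("scm", "its", "mls", "scr")  # commits, bug tracking, mailing lists, code reviews
KINDS = ("evolutionary", "static")


def data_url(source: str, kind: str) -> str:
    """Build the URL of one dashboard data file, following the pattern listed above."""
    if source not in SOURCES:
        raise ValueError(f"unknown data source: {source!r}")
    if kind not in KINDS:
        raise ValueError(f"unknown data kind: {kind!r}")
    return f"{BASE_URL}/{source}-{kind}.json"


def fetch(source: str, kind: str, timeout: float = 10.0):
    """Download and parse one data file (requires network access)."""
    with urlopen(data_url(source, kind), timeout=timeout) as response:
        return json.load(response)


# List every URL without touching the network.
for kind in KINDS:
    for source in SOURCES:
        print(data_url(source, kind))
```

A call such as `fetch("scm", "static")` would then return the parsed data for all projects, provided the dashboard is reachable.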