Notice: This Wiki is now read only and edits are no longer possible. Please see: https://gitlab.eclipse.org/eclipsefdn/helpdesk/-/wikis/Wiki-shutdown-plan for the plan.

EclipseQualityModel

We use the following quality model to assess project maturity. It should be stressed that this model has been discussed at length with PolarSys projects on the polarsys-iwg mailing list, and is the result of a common agreement among the people involved. Nevertheless, all feedback, comments, modifications, and remarks are welcome: check the PolarSys mailing list for any question.

Quality requirements have been identified in another page: Eclipse Quality Requirements. The overall architecture for the implementation of the measurement and analysis process is described in MaturityAssessmentToolsArchitecture. Concepts are described in EclipseMeasurementConcepts and metrics are described in EclipseMetrics.


Introduction

Intent

Quality models are useful only if users understand them, both in their structure and in their intent, because:

- The intent of a measure gives hints on how to understand and interpret results. This helps prevent the misuse of metrics.

- Software quality concerns are at the very heart of what the developers, and the Foundation, do. In some contexts (or projects) this may be a delicate subject to deal with.

- Criticism is easy, especially in this domain: software engineering is not mature enough to offer certainties. As a consequence, a common agreement has to be found with users to really get the most out of such work.

Why a specific quality model?

There are plenty of software quality models, from the early quality models of McCall and Boehm to the ISO 9126 and ISO SQuaRE (25xxx series) standards for products, and ISO 15504/SPICE or CMMI for processes. Since quality varies according to the domain (safety, testability or usability do not always have the same importance in a software product), some quality models have been published for specific domains, like HIC for the automotive industry, DO-178 for aeronautics, and ECSS for space. The model we intend to use needs to be adapted because:

- Embedded systems share some characteristics, but may really differ according to their application.

- Eclipse (and thus PolarSys) is a free software organisation, and open-source projects have specific concerns, both in quality and in metrics availability. For example:

- Maintainability is of great importance, since the project is intended to be modified by anyone.

- Contributions may not be consistent in the conventions, patterns, or process they follow. This may introduce bias into measurements.

- Community-related attributes (health, activity, popularity?) should be included in the model.

Requirements for building a quality model

First, check the "About metrics" section of the Eclipse metrics page.

Besides this, the quality measurement should be:

- Open and transparent, because in the end quality comes from the people themselves.

- Fully automated: don't rely on humans to gather and provide metrics, as this introduces far too much bias. The extraction and analysis process should be fully automated.

According to Marc-Alexis Côté M. Ing et al. in Software Quality Model Requirements for Software Quality Engineering, a quality model shall meet the following requirements:

- It should be usable from top to bottom: users shall be able to understand how quality is decomposed down to metrics used.

- It should be usable from bottom to top: quality shall be assessed from the retrieved metrics up to the quality characteristics.

- It should include the five perspectives on quality as defined by Garvin.

Model overview

Model structure

The complete quality model is broken down into three main parts:

- The core quality model, composed of a series of quality attributes. The list of attributes is described later on this page. They are defined in the data/polarsys_attributes.json file in the repository.

- These quality attributes are mapped to measurement concepts, such as "activity of the user mailing list", "code size", and "control-flow complexity". See EclipseMeasurementConcepts for the list of concepts. They are defined in the data/polarsys_questions.json file in the repository.

- Measurement concepts are in turn mapped to one or more metrics, e.g. "activity of the user mailing list" is measured through the number of posts on the user mailing list, "code size" through source lines of code, and "control-flow complexity" through cyclomatic complexity. See EclipseMetrics for the list of metrics. They are defined in the data/polarsys_metrics.json file in the repository.

The whole structure (i.e. the links between all attributes, questions and metrics) is defined in the data/polarsys_qm.json file in the repository.
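To make the top-to-bottom and bottom-to-top readings of such a structure concrete, here is a minimal Python sketch that walks a quality model tree. The schema of polarsys_qm.json is not documented on this page, so the nested "mnemo"/"children" layout and every mnemo below are hypothetical, and the tree is simplified to a single level of attributes (the real model first splits quality into product, process and ecosystem).

```python
import json

# Hypothetical, simplified quality model tree. The "mnemo"/"children" layout
# and the mnemos are illustrative only, not the real polarsys_qm.json schema.
QM_JSON = """
{
  "mnemo": "QM_QUALITY",
  "children": [
    {"mnemo": "QM_ANALYSABILITY", "children": [
      {"mnemo": "CONTROL_FLOW_COMPLEXITY", "children": [
        {"mnemo": "CYCLOMATIC_COMPLEXITY", "children": []}
      ]}
    ]},
    {"mnemo": "QM_ACTIVITY", "children": [
      {"mnemo": "USER_ML_ACTIVITY", "children": [
        {"mnemo": "USER_ML_POSTS", "children": []}
      ]}
    ]}
  ]
}
"""


def paths_to_metrics(node, prefix=()):
    """Top to bottom: yield every path from the root down to a leaf metric."""
    path = prefix + (node["mnemo"],)
    children = node.get("children", [])
    if not children:
        yield path
        return
    for child in children:
        yield from paths_to_metrics(child, path)


def metric_to_attributes(root):
    """Bottom to top: map each metric to the attributes it contributes to."""
    index = {}
    for path in paths_to_metrics(root):
        # In this simplified tree, path = (root, attribute, concept, metric).
        attribute, metric = path[1], path[-1]
        attributes = index.setdefault(metric, [])
        if attribute not in attributes:
            attributes.append(attribute)
    return index


if __name__ == "__main__":
    model = json.loads(QM_JSON)
    for path in paths_to_metrics(model):
        print(" -> ".join(path))
    print(metric_to_attributes(model))
```

Running it prints one root-to-metric path per line, followed by the inverted index that tells, for each metric, which attributes it feeds.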

While the quality attributes and measurement concepts are not supposed to change (except as the measurement process itself evolves), the metrics may change according to specific characteristics, such as the programming language used and the availability of data.

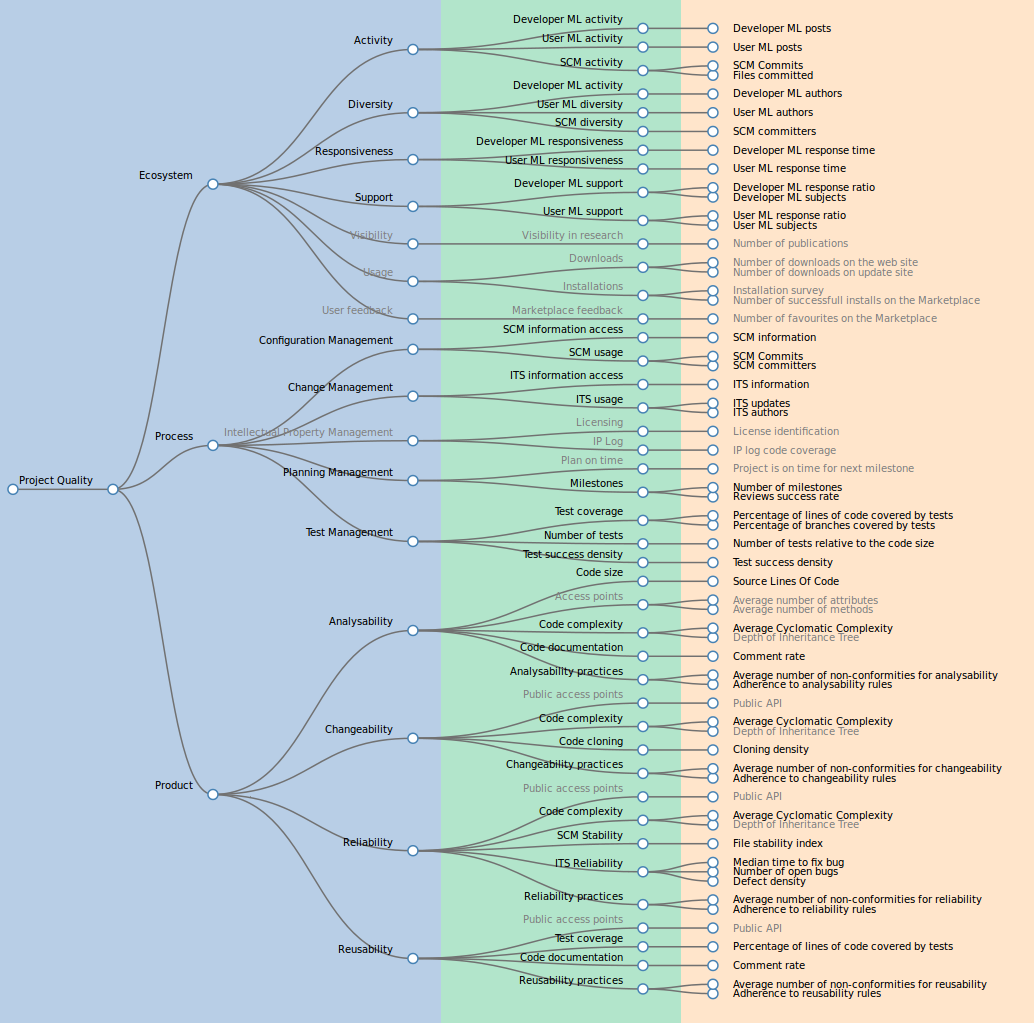

The picture above shows the full quality model, from quality attributes to measurement concepts and metrics. Quality attributes are on the left (blue background), measurement concepts are in the middle (green), and metrics are on the right (salmon orange).

Quality Attributes

Quality attributes are defined in the polarsys_attributes.json file; each attribute must have the following fields:

- name: the name used for display. It should not be too long.

- mnemo: the id used to build the full model and cross-reference attributes, concepts and metrics.

- description: a complete description of the attribute.

- type: set to "attribute"

- active: a boolean (true|false) indicating whether the attribute is currently in use.
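A data-file checker could enforce these field rules. The following is a minimal Python sketch, assuming polarsys_attributes.json holds a JSON array of attribute objects (that layout is an assumption, not documented here); the sample records and mnemos are hypothetical.

```python
import json

# Fields every attribute record must have, as listed on this page.
REQUIRED_FIELDS = ("name", "mnemo", "description", "type", "active")


def validate_attribute(record):
    """Return a list of problems found in one attribute record (empty if valid)."""
    problems = [f"missing field '{field}'" for field in REQUIRED_FIELDS
                if field not in record]
    if "type" in record and record["type"] != "attribute":
        problems.append(f"type is {record['type']!r}, expected 'attribute'")
    # JSON true/false become Python True/False; reject strings such as "yes".
    if "active" in record and not isinstance(record["active"], bool):
        problems.append("active must be a boolean (true|false)")
    return problems


# Hypothetical records; the assumed file layout is a JSON array of objects.
SAMPLE = """
[
  {"name": "Analysability", "mnemo": "QM_ANALYSABILITY",
   "description": "Ease of diagnosing deficiencies or assessing a change.",
   "type": "attribute", "active": true},
  {"name": "Usage", "mnemo": "QM_USAGE", "type": "attribute", "active": "yes"}
]
"""

if __name__ == "__main__":
    for record in json.loads(SAMPLE):
        print(record["mnemo"], validate_attribute(record) or "OK")
```

Returning a list of problems rather than raising on the first one lets a single run report every defect in a record.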

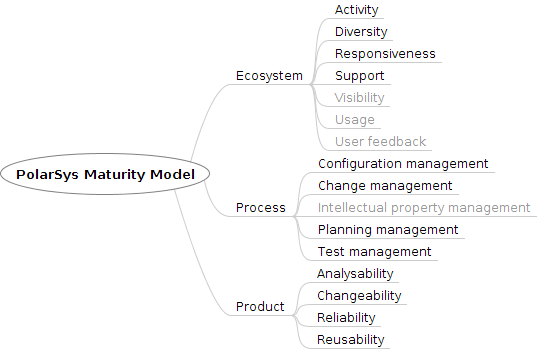

The picture above details the quality attributes defined as of today. Attributes in grey are inactive.

The basic principle is to decompose quality into three main characteristics (product, process, ecosystem), as identified in the Eclipse quality requirements. These are themselves decomposed into subcharacteristics.

Eclipse Quality

- Product

- Analysability: degree of effectiveness and efficiency with which it is possible to assess the impact on a product or system of an intended change to one or more of its parts, or to diagnose a product for deficiencies or causes of failures, or to identify parts to be modified (ISO/IEC 25010).

- Changeability: degree to which a product or system can be effectively and efficiently modified without introducing defects or degrading existing product quality (ISO/IEC 25010).

- Reusability: degree to which an asset can be used in more than one system, or in building other assets (ISO/IEC 25010).

- Reliability: degree to which a system, product or component performs specified functions under specified conditions for a specified period of time (ISO/IEC 25010).

- Process

- Configuration Management: the degree of maturity of software configuration management usage.

- Change Management: the degree of maturity of change management usage.

- Test Management: degree of .

- Intellectual Property: how is the intellectual property of contributions managed?

- Build and Release management: continuous integration, continuous testing, documentation of the build and release processes.

- Project planning: is the project predictable (milestones, reviews, release dates, etc.)?

- Ecosystem

- Activity: amount of recent contributions to the project.

- Diversity: amount of different actors for recent contributions to the project.

- Responsiveness: how fast users get answers to requests (e.g. shortest time of reply in mailing lists).

- Support: how much information is available on requests (e.g. number of threads, number of answers per post in mailing lists).

- Visibility: do people talk about the software? Are there research articles, journal articles, and conference presentations?

- Usage: degree of usage of the project: is it widely used, by different people in different contexts?

- User feedback: How do the users of the software evaluate the project and product?

Please note that the quality attributes for the product part are mapped to the ISO 9126 quality model, and the process characteristics are borrowed from, and organised according to, the Capability Maturity Model Integration Process Areas.

How to get the model?

The first implementation of the process was done on GitHub: http://borisbaldassari.github.io/PolarsysMaturity/

However, as work progressed, it was decided to move it to a private repository during development. In addition, some files (namely the quality model, attributes, questions and metrics) should not be public. From then on, all new development work has been done on Bitbucket: https://bitbucket.org/PolarSys/polarsysmaturity

All definition files use the JSON format and are located in the /PolarsysMaturity/data directory:

- polarsys_qm.json is the reference file for the quality model description. It includes the structure of the quality model up to the metrics used, and references all items (attributes, questions, metrics) by their mnemo.

- polarsys_attributes.json details all quality attributes used in the quality model.

- polarsys_concepts.json details all questions (measurement concepts) used in the quality model.

- polarsys_metrics.json details all metrics used in the quality model.

The repository also contains a number of utility scripts to check the data files for inconsistencies, pretty-print them, or parse the output of rule-checking scripts.
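One such consistency check is verifying that every mnemo referenced in polarsys_qm.json is defined in one of the other files, and that no definition goes unused. The repository's own scripts are not shown here, so the following Python sketch is an independent illustration under assumptions: the model file is taken to be a nested "mnemo"/"children" tree, each definition file a JSON array of objects with a "mnemo" field, and all sample mnemos are hypothetical.

```python
import json
from pathlib import Path


def referenced_mnemos(node):
    """Collect every mnemo referenced in the (assumed) quality model tree."""
    found = {node["mnemo"]}
    for child in node.get("children", []):
        found |= referenced_mnemos(child)
    return found


def check_consistency(qm, attributes, concepts, metrics):
    """Report mnemos used but never defined, and definitions never used."""
    used = referenced_mnemos(qm)
    defined = {rec["mnemo"] for recs in (attributes, concepts, metrics) for rec in recs}
    return {"undefined": sorted(used - defined), "unused": sorted(defined - used)}


def load_definitions(data_dir):
    """Read the four definition files from a data directory (layout assumed)."""
    def read(name):
        return json.loads((Path(data_dir) / name).read_text(encoding="utf-8"))
    return (read("polarsys_qm.json"), read("polarsys_attributes.json"),
            read("polarsys_concepts.json"), read("polarsys_metrics.json"))


if __name__ == "__main__":
    # Hypothetical in-memory data; USER_ML_POSTS is deliberately left undefined.
    qm = {"mnemo": "QM_QUALITY", "children": [
        {"mnemo": "QM_ACTIVITY", "children": [
            {"mnemo": "USER_ML_ACTIVITY", "children": [
                {"mnemo": "USER_ML_POSTS", "children": []}]}]}]}
    attributes = [{"mnemo": "QM_QUALITY"}, {"mnemo": "QM_ACTIVITY"},
                  {"mnemo": "QM_DIVERSITY"}]
    concepts = [{"mnemo": "USER_ML_ACTIVITY"}]
    metrics = []
    print(check_consistency(qm, attributes, concepts, metrics))
```

With real files, check_consistency(*load_definitions("data")) would run the same check. Note that this page names the concepts file both polarsys_questions.json and polarsys_concepts.json, so the file name in load_definitions may need adjusting.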

Since the repository is not public, you will need to ask someone for access. The polarsys-iwg mailing list is the right place to do so.

References

See also the Maisqual wiki for more information on metrics, papers, and quality models.