Difference between revisions of "SMILA/Documentation/Architecture Overview"

Revision as of 09:34, 24 January 2012

What is SMILA?

Introduction

SMILA is a framework for creating scalable server-side systems that process large amounts of unstructured data in order to build applications in areas such as search, linguistic analysis, or information mining. The goal is to let you easily integrate data source connectors, search engines, sophisticated analysis methods, and more, while gaining scalability and reliability out of the box.

As such, SMILA provides these main parts:

- JobManager: a system for asynchronous, scalable processing of data using configurable workflows. The system is able to reliably distribute the tasks to be done on big clusters of hosts. The workflows orchestrate easy-to-implement workers that can be used to integrate application-specific processing logic.

- Crawlers: concepts and basic implementations for scalable components that extract data from data sources.

- Pipelines: a system for processing synchronous requests (e.g. search requests) by orchestrating easy-to-implement components (pipelets) in workflows defined in BPEL.

- Storage: concepts for integrating big-data storages for efficient persistence of the processed data.

Eventually, all SMILA functionality will be accessible for external clients via an HTTP ReST API using JSON as the exchange data format.

As an Eclipse system, SMILA is built in OSGi and makes heavy use of the OSGi service component model.

Architecture Overview

Download this zip file containing the original PowerPoint file of this slide.

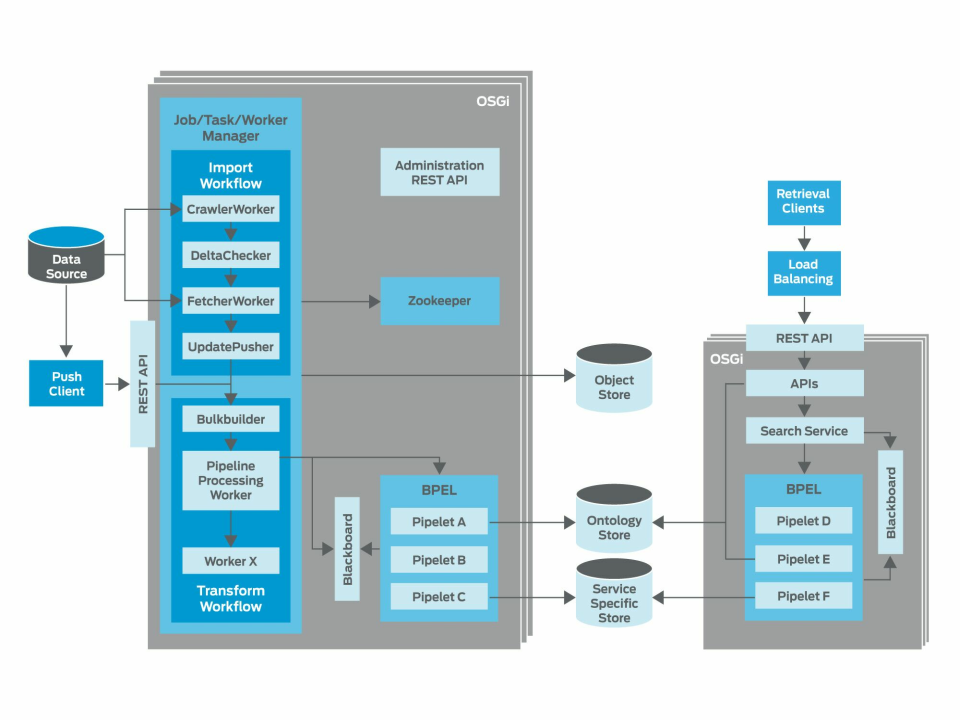

A SMILA system usually consists of two parts:

- First, data has to be imported into the system and processed to produce a search index, an ontology, or whatever else can be learned from the data.

- Second, the learned information is used to answer retrieval requests from users, for example search or ontology exploration requests.

In the first part, usually either a data source is crawled or an external client pushes data from the source into the SMILA system using the HTTP ReST API. Often the data consists of a large number of documents (e.g. from a file system, web site, or content management system). To be processed, each document is represented in SMILA by a record that holds the metadata of the document (name, size, access rights, authors, keywords, ...) and the original content of the document itself.
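To make the idea of a record more concrete, here is a minimal Python sketch of a document represented as metadata plus original content. It is not SMILA's actual record class: the `Record` type, the `record_id` field, and the `record_from_file` helper are hypothetical names chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class Record:
    """Illustrative stand-in for a record (not the real SMILA class)."""
    record_id: str                                   # hypothetical key, e.g. a path or URL
    metadata: Dict[str, Any] = field(default_factory=dict)
    content: Optional[bytes] = None                  # original document content, if fetched


def record_from_file(path: str, data: bytes, authors: List[str]) -> Record:
    """Build a record from a file's bytes plus a few metadata attributes."""
    name = path.rsplit("/", 1)[-1]
    return Record(
        record_id=path,
        metadata={"name": name, "size": len(data), "authors": authors},
        content=data,
    )


rec = record_from_file("/docs/report.txt", b"hello world", ["alice"])
```

Keeping the metadata separate from the content means that later processing steps can add or change attributes without touching the original bytes.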

To process large amounts of data, SMILA must be able to distribute the work across multiple SMILA nodes (computers). Therefore the bulkbuilder separates the incoming data into bulks of records of a configurable size and writes them to an ObjectStore. For each of these bulks, the JobManager creates tasks for workers, which process the bulk and produce other bulks containing the results. When a worker is available, it asks the TaskManager for tasks to do, does the work, and finally notifies the TaskManager about the result. Workflows define which workers should process a bulk and in what sequence. Whenever a worker finishes a task for a bulk successfully, the JobManager can create follow-up tasks based on the workflow definition. If a worker fails its task (because the process or machine crashes, or because of a network problem), the JobManager can decide to retry the task later and so ensure that the data is processed even under error conditions. The processing of the complete data set using such a workflow is called a job run, and the current state of a job run can easily be monitored via the HTTP ReST API.
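The bulk and task handling described above can be sketched in a few lines of Python. This is a single-process simulation under stated assumptions, not SMILA code: `build_bulks`, `run_job`, the in-memory task queue, and the `max_retries` parameter are hypothetical names, and the real system distributes tasks over several nodes and stores bulks in an ObjectStore.

```python
from collections import deque
from typing import Callable, Dict, Iterable, Iterator, List

Bulk = List[dict]
Worker = Callable[[Bulk], Bulk]


def build_bulks(records: Iterable[dict], bulk_size: int) -> Iterator[Bulk]:
    """Group incoming records into bulks of at most bulk_size records."""
    bulk: Bulk = []
    for record in records:
        bulk.append(record)
        if len(bulk) == bulk_size:
            yield bulk
            bulk = []
    if bulk:  # emit the final, possibly smaller bulk
        yield bulk


def run_job(bulks, workflow: List[str], workers: Dict[str, Worker], max_retries: int = 2):
    """Send each bulk through the workers named in workflow, retrying failed tasks."""
    # A task is (index of the workflow step, input bulk, attempts so far).
    tasks = deque((0, bulk, 0) for bulk in bulks)
    results, failed = [], []
    while tasks:
        step, bulk, attempts = tasks.popleft()
        try:
            output = workers[workflow[step]](bulk)
        except Exception:
            if attempts < max_retries:
                tasks.append((step, bulk, attempts + 1))  # retry the task later
            else:
                failed.append((workflow[step], bulk))     # give up on this task
            continue
        if step + 1 < len(workflow):
            tasks.append((step + 1, output, 0))           # follow-up task for the next worker
        else:
            results.append(output)                        # last step: final result bulk
    return results, failed


calls = {"count": 0}


def tokenize(bulk: Bulk) -> Bulk:
    """Example worker that crashes on its very first call to simulate a failure."""
    calls["count"] += 1
    if calls["count"] == 1:
        raise RuntimeError("simulated crash")
    return [{**r, "tokens": r["text"].split()} for r in bulk]


def count_tokens(bulk: Bulk) -> Bulk:
    return [{**r, "n": len(r["tokens"])} for r in bulk]


records = [{"text": "a b"}, {"text": "c"}, {"text": "d e f"}]
bulks = list(build_bulks(records, bulk_size=2))
results, failed = run_job(
    bulks, ["tokenize", "count"], {"tokenize": tokenize, "count": count_tokens}
)
```

In this run the first `tokenize` task fails once and is queued again, so all three records still reach the final step, although the result bulks may arrive in a different order than the input bulks.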

JobManager and TaskManager use Apache Zookeeper to coordinate the state of a job run and the to-do and in-progress tasks across multiple computers, so the job processing is distributed.

To make implementing workers easy, the SMILA JobManager system contains the WorkerManager that enables you to concentrate on the actual worker functionality without having to worry about getting the TaskManager and ObjectStore interaction right.

To extract large amounts of data from the data source, the asynchronous job framework can also be used to implement highly scalable crawlers. Crawling can be divided into several steps:

- getting names of elements from the datasource

- checking if the element has changed since a previous crawl run (delta check)

- getting the content of changed or new elements

- pushing the element to a processing job.

These steps can be implemented as separate workers, too, so the crawl work can be parallelized and distributed quite easily. By using the JobManager to control the crawling, we gain the same reliability and scalability for the crawling as for the processing. And implementing new crawlers is just as easy as implementing new workers.
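The four crawl steps listed above can be illustrated with a small Python sketch. It assumes a hypothetical in-memory data source that maps element names to a version stamp and the content, and a `seen` dictionary that remembers the stamps from earlier crawl runs. None of these names come from SMILA, and a real crawler would run each step as its own worker.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical data source: element name -> (version stamp, content).
Source = Dict[str, Tuple[str, bytes]]


def list_elements(source: Source) -> List[str]:
    """Step 1: get the names of the elements in the data source."""
    return sorted(source)


def delta_check(source: Source, names: List[str], seen: Dict[str, str]) -> List[str]:
    """Step 2: keep only elements that are new or changed since the previous run."""
    changed = []
    for name in names:
        version = source[name][0]
        if seen.get(name) != version:
            changed.append(name)
            seen[name] = version  # remember the stamp for the next crawl run
    return changed


def fetch_content(source: Source, names: List[str]) -> List[dict]:
    """Step 3: get the content of the changed or new elements."""
    return [{"name": name, "content": source[name][1]} for name in names]


def crawl(source: Source, seen: Dict[str, str], push: Callable[[dict], None]) -> int:
    """Run all steps in order; step 4 pushes each record to a processing job."""
    changed = delta_check(source, list_elements(source), seen)
    records = fetch_content(source, changed)
    for record in records:
        push(record)
    return len(records)


source: Source = {"a.txt": ("v1", b"alpha"), "b.txt": ("v1", b"beta")}
seen: Dict[str, str] = {}
pushed: List[dict] = []

first_run = crawl(source, seen, pushed.append)   # both elements are new
source["b.txt"] = ("v2", b"beta2")               # one element changes
second_run = crawl(source, seen, pushed.append)  # only the changed element is pushed
```

Because the delta check only compares version stamps, unchanged elements are filtered out before their content is fetched, which is where most of the saving in a repeated crawl comes from.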

Eventually, the final step of such an asynchronous processing workflow writes the processed data to some target system, for example a search engine, an ontology manager, or a database, where it can be used to process retrieval requests. This brings us to the second part of the system.

Such requests come from an external client application via the HTTP ReST API. They are usually synchronous, meaning that a client sends a request and waits for the result in order to present it to a user, and it expects the result to be produced rather quickly. At the same time, we want the processing of such synchronous requests to be as flexibly configurable as the asynchronous job processing. Therefore we use a different workflow processor here, which is based on a BPEL engine. The BPEL workflows in this processor (which we call pipelines) orchestrate so-called pipelets to perform the different steps needed to enrich and refine the original request and to produce the result. Implementing such a pipelet is probably even easier than implementing a worker ;-)
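As a rough illustration of how a pipeline passes a request through a sequence of pipelets, consider the following Python sketch. It deliberately replaces the BPEL engine with a plain loop over functions, so it only shows the data flow, not how SMILA pipelines are actually defined or executed. The pipelet names and the `query` and `maxcount` request fields are assumptions made for this example.

```python
from typing import Callable, Dict, List

Request = Dict[str, object]
Pipelet = Callable[[Request], Request]


def normalize_query(request: Request) -> Request:
    """Example pipelet: refine the request by cleaning up the query string."""
    return {**request, "query": str(request["query"]).strip().lower()}


def add_default_paging(request: Request) -> Request:
    """Example pipelet: enrich the request with a default unless the caller set one."""
    return {"maxcount": 10, **request}


def run_pipeline(pipelets: List[Pipelet], request: Request) -> Request:
    """Call each pipelet in order, feeding the output of one into the next."""
    for pipelet in pipelets:
        request = pipelet(request)
    return request


result = run_pipeline(
    [normalize_query, add_default_paging],
    {"query": "  SMILA Architecture "},
)
```

The caller blocks until `run_pipeline` returns, which mirrors the synchronous nature of these requests: the client sends one request and waits for one result.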

Finally, it's even possible to combine both workflow variants, because there is a PipelineProcessing worker in the asynchronous system that performs a task by executing a synchronous pipeline. So it's possible to implement a pipelet only once and have its functionality available in both kinds of workflows. Additionally, there is a PipeletProcessing worker available that executes just a single pipelet and so saves the overhead of the synchronous workflow processor if one pipelet is sufficient to execute the tasks.
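The idea of reusing a pipelet inside the asynchronous system can be sketched as two small adapters in Python. This is only a conceptual model under the assumption that a worker maps an input bulk of records to an output bulk. The functions `pipelet_worker` and `pipeline_worker` are hypothetical and do not reflect how the PipeletProcessing and PipelineProcessing workers are actually implemented.

```python
from typing import Callable, Dict, List

Record = Dict[str, object]
Pipelet = Callable[[Record], Record]
Worker = Callable[[List[Record]], List[Record]]


def add_length(record: Record) -> Record:
    """Example pipelet: annotate a record with the length of its text."""
    return {**record, "length": len(str(record["text"]))}


def mark_processed(record: Record) -> Record:
    """Example pipelet: flag the record as processed."""
    return {**record, "processed": True}


def pipelet_worker(pipelet: Pipelet) -> Worker:
    """Wrap a single pipelet as a worker, with no pipeline in between."""
    def worker(bulk: List[Record]) -> List[Record]:
        return [pipelet(record) for record in bulk]
    return worker


def pipeline_worker(pipelets: List[Pipelet]) -> Worker:
    """Wrap a whole sequence of pipelets as a worker."""
    def worker(bulk: List[Record]) -> List[Record]:
        output = []
        for record in bulk:
            for pipelet in pipelets:
                record = pipelet(record)
            output.append(record)
        return output
    return worker


bulk = [{"text": "abc"}, {"text": "de"}]
single = pipelet_worker(add_length)(bulk)
combined = pipeline_worker([add_length, mark_processed])(bulk)
```

The same `add_length` function serves both adapters unchanged, which is the point of the paragraph above: the processing logic is written once and the surrounding workflow system decides how it is invoked.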

Want to know more?

For further up-to-date documentation of all implemented components, please see:

- See SMILA in action: SMILA in 5 Minutes

- Read the Manual