
Hudson-ci/Planning/Better Build Pipeline Orchestration

Planning: Better Build Pipeline Orchestration

The problem

One of our major problems with Hudson is the coordination and grouping of multiple jobs. While a lot can be done with the various plug-ins such as join-plugin, parameterized-trigger-plugin, etc., there are still some gaps in the functionality because Hudson as a whole has no concept of a group of jobs.

In order to illustrate our problem, please consider the following setup.

- We have one job which does the actual compile of the project and creates the deployable artifacts.

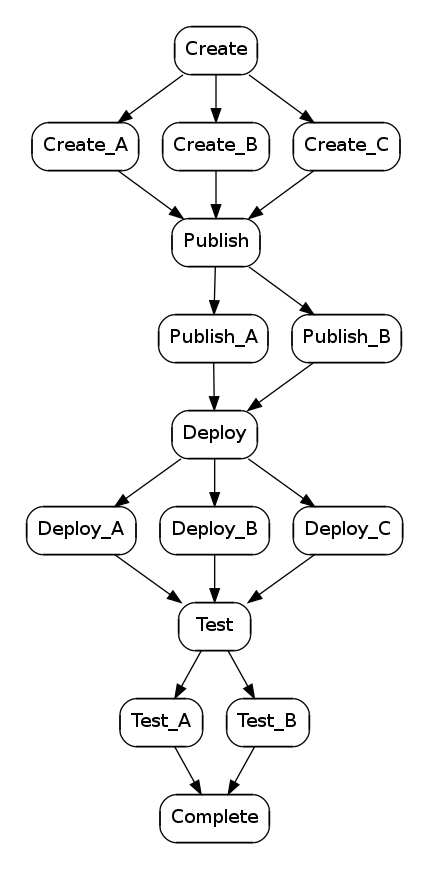

- For each environment we have a series of jobs for deploying and testing the build. See the graph for the job structure; jobs on the same line run in parallel.

- The Create job has a timer trigger of once per night in order to recreate the WebLogic domains.

- The Publish job has a timer trigger of once per hour to deploy the newest code.

It is the Publish job which is causing the most problems from a job coordination point of view. It has no understanding of the chain of jobs which it triggers, so our only option is to set the timer trigger such that there is enough time for the whole chain of jobs to run successfully. This has two problems.

- If the chain of jobs fails early, there is no way for Hudson to see this and trigger a new run of the pipeline (a new run of Publish).

- If someone manually starts a new Publish, they have to temporarily disable Publish so that the hourly run does not trigger; otherwise the two series of jobs will step on each other's toes.

We have tried to use the block on upstream/downstream options, but that is not sufficient to solve the problem. The problem with that approach is that the two series of jobs will interleave, causing unpredictable results. The interleaving problem can, for example, occur if:

- "Deploy_B" is running

- "Publish" is triggered (queue now has Publish)

- "Deploy_B" finishes and triggers "Test" (queue now has Publish, Test)

- "Publish" runs and triggers "Publish_A" and "Publish_B" (queue now has Test, Publish_A, Publish_B)

- "Test" runs and triggers "Test_A" and "Test_B" (queue now has Publish_A, Publish_B, Test_A, Test_B)

How much interleaving there is depends on the block upstream/downstream settings, but I have not been able to find a combination which results in a predictable "first pipeline finished first" behaviour.
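The interleaving trace above can be replayed with a small simulation. This is only a sketch and not Hudson code: it assumes a single executor and a plain first-in-first-out queue, and it models only the trigger edges named in the trace. The names `TRIGGERS`, `finish`, `run_next` and `replay` are made up for the illustration.

```python
from collections import deque

# Trigger edges taken from the trace; the rest of the job graph is not modelled.
TRIGGERS = {
    "Publish": ["Publish_A", "Publish_B"],
    "Deploy_B": ["Test"],
    "Test": ["Test_A", "Test_B"],
}


def finish(job, queue):
    """A job completes: its downstream jobs are appended to the FIFO queue."""
    queue.extend(TRIGGERS.get(job, []))


def run_next(queue):
    """Assumed single executor: take the head of the queue and run it to completion."""
    job = queue.popleft()
    finish(job, queue)
    return job


def replay():
    """Replay the trace and record the queue contents after each step."""
    queue = deque()
    snapshots = []

    # Deploy_B from the previous run is still executing when the timer fires.
    queue.append("Publish")
    snapshots.append(list(queue))

    finish("Deploy_B", queue)   # old run continues: Test is queued behind Publish
    snapshots.append(list(queue))

    run_next(queue)             # Publish (new run) starts before Test (old run)
    snapshots.append(list(queue))

    run_next(queue)             # Test (old run) now runs between the new run's jobs
    snapshots.append(list(queue))
    return snapshots


for snapshot in replay():
    print(snapshot)
```

The last snapshot is `['Publish_A', 'Publish_B', 'Test_A', 'Test_B']`: jobs of the new run sit in front of the remaining tests of the old run, so the old tests end up running against whatever the new run has just published.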

Suggested solution

The initial work to solve the problem might be as simple as creating a pipeline-aware blocker which only allows the job to run if the previous chain of jobs has completed. This way the Publish job could have a trigger of every ten minutes, but it would just sit in the queue in a ready state until the previous chain has finished.
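A minimal sketch of what such a blocker would have to decide, assuming it can see the trigger graph and the set of jobs that are currently queued or running. Nothing here is an existing Hudson API: `downstream_closure`, `may_start`, `GRAPH` and the edges from Publish_A/Publish_B to Deploy_A/Deploy_B are assumptions made for the illustration.

```python
def downstream_closure(triggers, root):
    """Return every job reachable from `root` via trigger edges (root excluded)."""
    seen = set()
    stack = list(triggers.get(root, []))
    while stack:
        job = stack.pop()
        if job not in seen:          # also copes with diamond-shaped chains
            seen.add(job)
            stack.extend(triggers.get(job, []))
    return seen


def may_start(job, triggers, pipeline_heads, busy):
    """Decide whether `job` may leave the queue.

    Only pipeline heads are gated. A head is held back while any job further
    down its chain is still queued or running (`busy`).
    """
    if job not in pipeline_heads:
        return True
    return not (downstream_closure(triggers, job) & busy)


# Hypothetical trigger graph; only some of these edges appear in the text above.
GRAPH = {
    "Publish": ["Publish_A", "Publish_B"],
    "Publish_A": ["Deploy_A"],       # assumed edge
    "Publish_B": ["Deploy_B"],       # assumed edge
    "Deploy_B": ["Test"],
    "Test": ["Test_A", "Test_B"],
}

print(may_start("Publish", GRAPH, {"Publish"}, busy={"Deploy_B"}))  # False
print(may_start("Publish", GRAPH, {"Publish"}, busy=set()))         # True
```

With this check in place, the queued Publish from the interleaving example would stay in the queue until Test_A and Test_B of the previous run have finished.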

A better (long-term) solution would be to clean up the triggering and chaining parts, since there is now a multitude of plug-ins trying to complete the functionality and support each other. I would make the passing of parameters a first-class option in all triggering mechanisms, add first-class support for diamond-shaped job chains, and lastly add build quality levels.

For the long term I would suggest:

- Make parameter passing integrated with the core triggering functions, eliminating the need for "parameterized-trigger-plugin".

- Make the join plugin use the new parameter functionality.

- Make the join plugin support waiting for jobs "further down the chain" instead of, as it is now, just the immediate jobs.

- Make build promotion an integrated feature, leaving the "actions" part of the build-promotion-plugin as plug-ins. This will also naturally clean up the action list a lot.

- Make build promotion have a notion of "level" instead of just being a list of named promotion labels. Make the log rotator aware of promotion levels, so that it can keep only x builds of a given promotion and remove all older builds which did not receive the promotion.
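The last point, a log rotator that understands promotion levels, could behave roughly as sketched below. This is one possible reading of the rule, not an existing implementation: the `Build` record, the numeric `level` field (0 meaning "not promoted") and the `builds_to_keep` function are assumptions, as is the choice to keep unpromoted builds that are newer than the newest promoted one, since they may still be promoted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Build:
    number: int   # higher number = newer build
    level: int    # assumed numeric promotion level, 0 = not promoted


def builds_to_keep(builds, min_level, keep):
    """Keep the `keep` newest builds promoted to at least `min_level`,
    plus any newer builds that have not been promoted yet."""
    ordered = sorted(builds, key=lambda b: b.number, reverse=True)
    promoted = [b for b in ordered if b.level >= min_level][:keep]
    if not promoted:
        return ordered  # nothing promoted yet, so nothing is rotated away
    newest_promoted = promoted[0].number
    pending = [b for b in ordered if b.number > newest_promoted]
    return sorted(pending + promoted, key=lambda b: b.number, reverse=True)


history = [Build(1, 2), Build(2, 0), Build(3, 2), Build(4, 0), Build(5, 2), Build(6, 0)]
kept = builds_to_keep(history, min_level=2, keep=2)
print([b.number for b in kept])  # [6, 5, 3]
```

In the example, builds 2 and 4 are removed because they are older than a kept promoted build and never received the promotion, and build 1 is removed because only two promoted builds are kept.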

The Job Graph

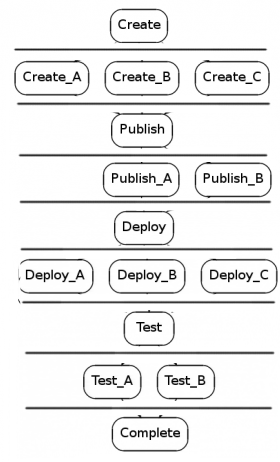

Job Pipeline

This is the same diagram in pipeline representation. The entire drawing is a single pipeline with pipeline stages arranged vertically and jobs (or other pipelines) within a stage arranged horizontally. Execution of the pipeline begins at the first (top) stage and proceeds sequentially to the last (bottom) stage. Jobs (or other pipelines) in each stage execute in parallel.
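The execution rule described here (stages run one after another, jobs within a stage run in parallel) can be sketched as follows. The stage layout, the `run_pipeline` and `fake_job` names, and the decision to stop at the first failed stage are assumptions for the illustration; nested pipelines are not modelled.

```python
from concurrent.futures import ThreadPoolExecutor


def run_pipeline(stages, run_job):
    """Run `stages` (a list of lists of job names) top to bottom.

    Jobs inside one stage run in parallel; the next stage starts only after
    every job of the current stage has returned. `run_job(name)` returns True
    on success. Assumption: a failed stage stops the pipeline.
    """
    results = []
    for stage in stages:
        with ThreadPoolExecutor(max_workers=max(1, len(stage))) as pool:
            outcomes = list(pool.map(run_job, stage))  # results in input order
        results.append(dict(zip(stage, outcomes)))
        if not all(outcomes):
            break
    return results


def fake_job(name):
    """Stand-in for a real build; pretends that Deploy_B fails."""
    return name != "Deploy_B"


# Hypothetical stage layout; the real one is in the diagram.
stages = [
    ["Publish"],
    ["Publish_A", "Publish_B"],
    ["Deploy_A", "Deploy_B"],
    ["Test"],
]

for stage_result in run_pipeline(stages, fake_job):
    print(stage_result)
```

Because the pipeline as a whole knows that the third stage failed, it can report the failure or start a fresh run immediately, which is exactly what the timer-based setup cannot do.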