SMILA/Specifications/Management Of The Smila Components

Use case

Some SMILA components need to be manageable.

The Lucene index should be managed through a JMX agent that exposes operations for creating, deleting, and renaming indexes. For the XMLStorage, operations for deleting and renaming partitions should be accessible.

This article is based on discussions with Dmitriy Hazin and Ivan Churkin.

Description

Let's discuss the problem using index management as an example.

See Workflow Smila.

The core of the SMILA system (Router -> JMS queue -> Listener -> BPEL processor) works with Record objects; in other words, the record connects all components of the system.

The Router pushes the record into the JMS message queue (ActiveMQ), where records are collected until they are processed further. The Listener consumes records from the queue and invokes the appropriate pipeline for each one. Currently SMILA has two pipelines, the AddPipeline and the DeletePipeline, which invoke several services and one pipelet to process a record.
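The Router -> queue -> Listener -> pipeline flow described above can be modeled in plain Java to make the hand-off explicit. This is a minimal in-process sketch under stated assumptions: a `java.util.concurrent.BlockingQueue` stands in for the ActiveMQ queue, a record is reduced to an id plus an operation name, and `RecordFlowSketch`, `RecordStub`, and the pipeline keys are hypothetical illustrations, not SMILA's actual API (Java 16+ for `record`).

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.function.Consumer;

/** Hypothetical in-process model of Router -> queue -> Listener -> pipeline (not SMILA's API). */
public class RecordFlowSketch {

  /** Simplified record: an id plus the operation that selects the pipeline (assumption). */
  record RecordStub(String id, String operation) {}

  // Stand-in for the JMS (ActiveMQ) queue.
  private final BlockingQueue<RecordStub> queue = new LinkedBlockingQueue<>();

  // Pipelines keyed by operation name, e.g. "ADD" for the AddPipeline (hypothetical keys).
  private final Map<String, Consumer<RecordStub>> pipelines;

  RecordFlowSketch(Map<String, Consumer<RecordStub>> pipelines) {
    this.pipelines = pipelines;
  }

  /** Router: pushes the record into the queue, where it waits for processing. */
  void route(RecordStub rec) {
    queue.add(rec);
  }

  /** Listener: drains the queue in FIFO order and invokes the matching pipeline per record. */
  int listenOnce() {
    int processed = 0;
    RecordStub next;
    while ((next = queue.poll()) != null) {
      Consumer<RecordStub> pipeline = pipelines.get(next.operation());
      if (pipeline == null) {
        throw new IllegalStateException("No pipeline for operation " + next.operation());
      }
      pipeline.accept(next);
      processed++;
    }
    return processed;
  }

  public static void main(String[] args) {
    List<String> log = new ArrayList<>();
    RecordFlowSketch flow = new RecordFlowSketch(Map.of(
        "ADD", r -> log.add("AddPipeline:" + r.id()),
        "DELETE", r -> log.add("DeletePipeline:" + r.id())));
    flow.route(new RecordStub("doc-1", "ADD"));
    flow.route(new RecordStub("doc-2", "DELETE"));
    flow.listenOnce();
    System.out.println(log); // [AddPipeline:doc-1, DeletePipeline:doc-2]
  }
}
```

The point of the model is that the Router never calls a pipeline directly; the queue decouples them, and only the Listener knows which pipeline handles which record.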

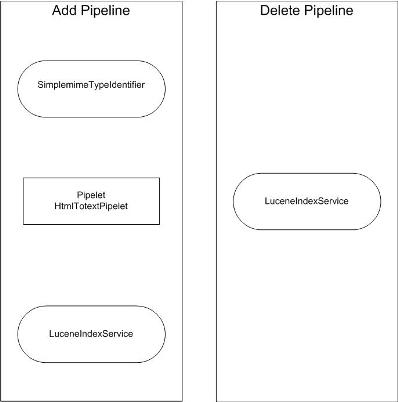

Figure 1. Add pipeline (see addpipeline.bpel). Delete pipeline (see deletepipeline.bpel).

As shown in Figure 1, the AddPipeline first invokes the SimplemimeTypeIdentifier service and the HtmlTotextPipelet pipelet, which prepare the record for indexing, and then the LuceneIndexService, which accesses the index directly and adds the record to it.

The DeletePipeline invokes the LuceneIndexService for deleting a record from the index.
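The AddPipeline can be read as an ordered chain of steps, each enriching the record before the final indexing step. The sketch below models that chain with `java.util.function.UnaryOperator` over a map of record attributes. The step names mirror Figure 1, but their bodies (a file-extension MIME check, a regex tag stripper, an in-memory "index") are hypothetical stand-ins and do not reflect how the real SimplemimeTypeIdentifier, HtmlTotextPipelet, or LuceneIndexService work.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.UnaryOperator;

/** Hypothetical model of the AddPipeline: each step transforms the record's attributes. */
public class AddPipelineSketch {

  // The "index": record id -> indexed text (stand-in for the Lucene index).
  static final Map<String, String> INDEX = new HashMap<>();

  // Stand-in for SimplemimeTypeIdentifier: derive a MIME type from the file name.
  static final UnaryOperator<Map<String, String>> MIME_TYPE = rec -> {
    Map<String, String> out = new HashMap<>(rec);
    out.put("mimeType", rec.get("fileName").endsWith(".html") ? "text/html" : "text/plain");
    return out;
  };

  // Stand-in for HtmlTotextPipelet: strip tags, but only from HTML content.
  static final UnaryOperator<Map<String, String>> HTML_TO_TEXT = rec -> {
    Map<String, String> out = new HashMap<>(rec);
    if ("text/html".equals(rec.get("mimeType"))) {
      out.put("content", rec.get("content").replaceAll("<[^>]*>", ""));
    }
    return out;
  };

  // Stand-in for LuceneIndexService: store the prepared text under the record id.
  static final UnaryOperator<Map<String, String>> INDEX_RECORD = rec -> {
    INDEX.put(rec.get("id"), rec.get("content"));
    return rec;
  };

  static final List<UnaryOperator<Map<String, String>>> ADD_PIPELINE =
      List.of(MIME_TYPE, HTML_TO_TEXT, INDEX_RECORD);

  /** Runs the record through every step in order and returns the final record. */
  static Map<String, String> run(Map<String, String> rec) {
    Map<String, String> current = rec;
    for (UnaryOperator<Map<String, String>> step : ADD_PIPELINE) {
      current = step.apply(current);
    }
    return current;
  }

  public static void main(String[] args) {
    run(Map.of("id", "doc-1", "fileName", "page.html", "content", "<p>Hello</p>"));
    System.out.println(INDEX); // {doc-1=Hello}
  }
}
```

Only the last step touches the index, which matches the text: the preparation steps never need to know how the index is stored.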

The LuceneIndexService accesses an index through two methods:

```java
private void addRecord(final Blackboard blackboard, final Id id, String indexName) ...

private void deleteRecord(final Id id, String indexName) ...

IndexConnection indexConnection = IndexManager.getInstance(indexName);
```

Thus the system has no direct reference to the Lucene index implementation as such. All indexing operations are carried out by calling methods of the LuceneIndexService.
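The indirection described above, where callers ask `IndexManager.getInstance(indexName)` for a connection instead of touching Lucene, is essentially a name-keyed registry. Below is a minimal sketch of that pattern under assumptions: `IndexRegistry` and `InMemoryConnection` are hypothetical names, and the connection simply stores documents in a map rather than wrapping a real Lucene index.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Hypothetical name-keyed registry, analogous in spirit to IndexManager.getInstance(indexName). */
public class IndexRegistry {

  /** Hypothetical connection type; callers never see the underlying index implementation. */
  static final class InMemoryConnection {
    private final Map<String, String> docs = new ConcurrentHashMap<>();

    void add(String id, String text) { docs.put(id, text); }
    void delete(String id) { docs.remove(id); }
    int size() { return docs.size(); }
  }

  private static final Map<String, InMemoryConnection> CONNECTIONS = new ConcurrentHashMap<>();

  /** Returns the single connection for indexName, creating it on first use. */
  static InMemoryConnection getInstance(String indexName) {
    return CONNECTIONS.computeIfAbsent(indexName, name -> new InMemoryConnection());
  }

  // Service-side methods mirroring addRecord/deleteRecord: only the index name is known here.
  static void addRecord(String id, String text, String indexName) {
    getInstance(indexName).add(id, text);
  }

  static void deleteRecord(String id, String indexName) {
    getInstance(indexName).delete(id);
  }

  public static void main(String[] args) {
    addRecord("doc-1", "hello", "test_index");
    addRecord("doc-2", "world", "test_index");
    deleteRecord("doc-1", "test_index");
    System.out.println(getInstance("test_index").size()); // 1
  }
}
```

Because every caller goes through the registry by name, swapping the storage behind a connection does not affect the pipelines, which is the property the paragraph above relies on.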

On this basis, there are two ways to implement index management:

- Natural way: management through pseudo records that contain the index command.

- Surgical way: direct access to the Lucene API through the LuceneIndexService.

Technical Proposal

Management by pseudo records

A pseudo record contains no data apart from the index command, which is stored in its metadata. Its sole purpose is to tell the LuceneIndexService which operation is required: deleting, renaming, or creating an index.
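A pseudo record can be pictured as a record whose only content is the command in its metadata. This sketch assumes the command is carried as two annotation values, `indexName` and `executionMode`, as in the indexmanagementpipeline.bpel fragment on this page. `DELETE_INDEX` comes from that fragment; `CREATE_INDEX`, `RENAME_INDEX`, the `newIndexName` key, and the `PseudoRecord` type are hypothetical (Java 16+ for `record`).

```java
import java.util.Map;

/** Hypothetical pseudo record: no content, only an index command in its metadata. */
public class PseudoRecordSketch {

  /** DELETE_INDEX appears in the BPEL example; the other two names are assumptions. */
  enum IndexCommand { CREATE_INDEX, DELETE_INDEX, RENAME_INDEX }

  record PseudoRecord(Map<String, String> metadata) {

    IndexCommand command() {
      return IndexCommand.valueOf(metadata.get("executionMode"));
    }

    String indexName() {
      return metadata.get("indexName");
    }
  }

  /** Builds a pseudo record; newName is only used by RENAME_INDEX (hypothetical key "newIndexName"). */
  static PseudoRecord of(IndexCommand command, String indexName, String newName) {
    if (command == IndexCommand.RENAME_INDEX && newName == null) {
      throw new IllegalArgumentException("RENAME_INDEX needs a new index name");
    }
    return new PseudoRecord(newName == null
        ? Map.of("executionMode", command.name(), "indexName", indexName)
        : Map.of("executionMode", command.name(), "indexName", indexName, "newIndexName", newName));
  }

  public static void main(String[] args) {
    PseudoRecord rec = of(IndexCommand.DELETE_INDEX, "test_index", null);
    System.out.println(rec.command() + " " + rec.indexName()); // DELETE_INDEX test_index
  }
}
```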

Possible realization

Create an additional pipeline, “IndexManagementPipeline”, to which pseudo records are sent. For this pipeline, either:

- Create a set of pipelets, one for each operation

or

- Invoke the LuceneIndexService directly from the “IndexManagementPipeline”. In this case the LuceneIndexService has to be extended with a new method for each required operation.

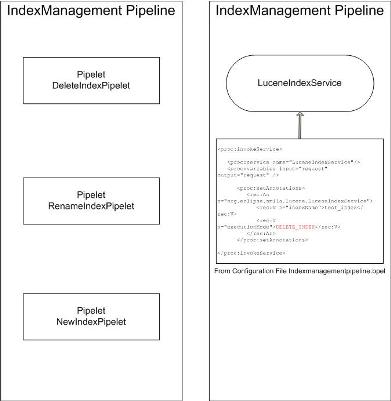

Figure 2. Possible realization of the IndexManagementPipeline.
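The first option, one pipelet per operation, amounts to the Command pattern: the pipeline looks up the pipelet that matches the command stored in the pseudo record and executes it. A minimal sketch, assuming a hypothetical `Pipelet` interface and an in-memory set of index names in place of real Lucene indexes; the pipelet and metadata key names (including `newIndexName`) are illustrative only.

```java
import java.util.EnumMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/** Hypothetical IndexManagementPipeline that dispatches pseudo records to one pipelet per operation. */
public class IndexManagementPipelineSketch {

  enum IndexCommand { CREATE_INDEX, DELETE_INDEX, RENAME_INDEX }

  /** Hypothetical pipelet contract: process one pseudo record's metadata. */
  interface Pipelet {
    void process(Map<String, String> metadata);
  }

  // Stand-in for the set of existing Lucene indexes.
  final Set<String> indexes = new HashSet<>();

  private final Map<IndexCommand, Pipelet> pipelets = new EnumMap<>(IndexCommand.class);

  IndexManagementPipelineSketch() {
    pipelets.put(IndexCommand.CREATE_INDEX, md -> indexes.add(md.get("indexName")));
    pipelets.put(IndexCommand.DELETE_INDEX, md -> indexes.remove(md.get("indexName")));
    pipelets.put(IndexCommand.RENAME_INDEX, md -> {
      if (indexes.remove(md.get("indexName"))) {
        indexes.add(md.get("newIndexName"));
      }
    });
  }

  /** Selects the pipelet from the executionMode entry and runs it. */
  void process(Map<String, String> pseudoRecordMetadata) {
    IndexCommand command = IndexCommand.valueOf(pseudoRecordMetadata.get("executionMode"));
    pipelets.get(command).process(pseudoRecordMetadata);
  }

  public static void main(String[] args) {
    IndexManagementPipelineSketch pipeline = new IndexManagementPipelineSketch();
    pipeline.process(Map.of("executionMode", "CREATE_INDEX", "indexName", "test_index"));
    pipeline.process(Map.of("executionMode", "RENAME_INDEX",
        "indexName", "test_index", "newIndexName", "archive_index"));
    System.out.println(pipeline.indexes); // [archive_index]
  }
}
```

Adding an operation here means registering one more pipelet; the pipeline's entry point `process` stays unchanged.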

The configuration file for the IndexManagementPipeline, indexmanagementpipeline.bpel, could contain:

```xml
<proc:invokeService>
  <proc:service name="LuceneIndexService" />
  <proc:variables input="request" output="request" />
  <proc:setAnnotations>
    <rec:An n="org.eclipse.smila.lucene.LuceneIndexService">
      <rec:V n="indexName">test_index</rec:V>
      <rec:V n="executionMode">DELETE_INDEX</rec:V>
    </rec:An>
  </proc:setAnnotations>
</proc:invokeService>
```
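To make the fragment concrete, the command it carries boils down to two name/value pairs inside the `rec:An` annotation. The sketch below pulls those pairs out with the JDK's DOM parser. It only illustrates which values the fragment carries; it does not reproduce how SMILA's BPEL processor evaluates `proc:setAnnotations`. The `proc:` and `rec:` prefixes are undeclared in the fragment, so the parser is deliberately used in its default, non-namespace-aware mode.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.parsers.ParserConfigurationException;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.SAXException;

/** Lists the rec:V name/value pairs carried by the indexmanagementpipeline.bpel fragment. */
public class AnnotationReader {

  // The fragment from this page, with its prefixes left undeclared as in the original.
  static final String FRAGMENT = "<proc:invokeService>"
      + "<proc:service name=\"LuceneIndexService\" />"
      + "<proc:variables input=\"request\" output=\"request\" />"
      + "<proc:setAnnotations>"
      + "<rec:An n=\"org.eclipse.smila.lucene.LuceneIndexService\">"
      + "<rec:V n=\"indexName\">test_index</rec:V>"
      + "<rec:V n=\"executionMode\">DELETE_INDEX</rec:V>"
      + "</rec:An>"
      + "</proc:setAnnotations>"
      + "</proc:invokeService>";

  /** Returns the rec:V values keyed by their n attribute, in document order. */
  static Map<String, String> readValues(String xml) {
    try {
      // The default factory is not namespace-aware, so "rec:V" is matched as a plain tag name.
      DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
      Document doc = factory.newDocumentBuilder()
          .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
      Map<String, String> values = new LinkedHashMap<>();
      NodeList nodes = doc.getElementsByTagName("rec:V");
      for (int i = 0; i < nodes.getLength(); i++) {
        Element value = (Element) nodes.item(i);
        values.put(value.getAttribute("n"), value.getTextContent());
      }
      return values;
    } catch (ParserConfigurationException | SAXException | IOException e) {
      throw new IllegalArgumentException("Cannot parse annotation fragment", e);
    }
  }

  public static void main(String[] args) {
    System.out.println(readValues(FRAGMENT)); // {indexName=test_index, executionMode=DELETE_INDEX}
  }
}
```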

Advantages of this approach

- No new mechanism for executing index commands is needed (this is especially important for a distributed system).

- The history of commands is easily maintained.
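Because each command travels as an ordinary record, keeping a history amounts to appending every processed command to a log. A minimal sketch of that idea, assuming a hypothetical `CommandHistory` class backed by an in-memory list; persisting the log, as a real deployment would likely need, is not shown (Java 16+ for `record` and `Stream.toList`).

```java
import java.time.Instant;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

/** Hypothetical append-only log of index commands carried by pseudo records. */
public class CommandHistory {

  record Entry(Instant at, String executionMode, String indexName) {}

  private final List<Entry> entries = new ArrayList<>();

  /** Appends a command as it is processed. */
  void log(String executionMode, String indexName) {
    entries.add(new Entry(Instant.now(), executionMode, indexName));
  }

  /** Read-only view of all commands, oldest first. */
  List<Entry> entries() {
    return Collections.unmodifiableList(entries);
  }

  /** All commands that touched the given index, e.g. to audit a deletion. */
  List<Entry> forIndex(String indexName) {
    return entries.stream().filter(e -> e.indexName().equals(indexName)).toList();
  }

  public static void main(String[] args) {
    CommandHistory history = new CommandHistory();
    history.log("CREATE_INDEX", "test_index");
    history.log("DELETE_INDEX", "test_index");
    history.forIndex("test_index").forEach(e -> System.out.println(e.executionMode()));
  }
}
```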

Management directly by the LuceneIndexService

In this approach, the LuceneIndexService has to implement new methods: deleteIndex(), renameIndex(), and createIndex().
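The direct approach means widening the service's own API. The sketch below shows what that change looks like as a Java interface. The method names come from the text; the parameter lists, the `IndexAdminService` name, and the in-memory implementation are assumptions made for illustration.

```java
import java.util.HashSet;
import java.util.Set;

/** Hypothetical sketch of the "surgical" option: index operations added to the service API. */
public class DirectIndexApiSketch {

  /** Each new operation is a new method, i.e. a change to the service's public API. */
  interface IndexAdminService {
    void createIndex(String indexName);
    void deleteIndex(String indexName);
    void renameIndex(String oldName, String newName);
  }

  /** In-memory stand-in for a LuceneIndexService that implements the extended API. */
  static final class InMemoryIndexAdmin implements IndexAdminService {
    final Set<String> indexes = new HashSet<>();

    @Override
    public void createIndex(String indexName) {
      if (!indexes.add(indexName)) {
        throw new IllegalStateException("Index already exists: " + indexName);
      }
    }

    @Override
    public void deleteIndex(String indexName) {
      if (!indexes.remove(indexName)) {
        throw new IllegalStateException("No such index: " + indexName);
      }
    }

    @Override
    public void renameIndex(String oldName, String newName) {
      if (indexes.contains(newName)) {
        throw new IllegalStateException("Index already exists: " + newName);
      }
      deleteIndex(oldName); // fails if oldName does not exist
      indexes.add(newName);
    }
  }

  public static void main(String[] args) {
    InMemoryIndexAdmin admin = new InMemoryIndexAdmin();
    admin.createIndex("test_index");
    admin.renameIndex("test_index", "archive_index");
    System.out.println(admin.indexes); // [archive_index]
  }
}
```

Every further operation would add yet another method to this interface and to every implementation, which is the API churn this section argues against.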

Directly invoking the LuceneIndexService would also achieve the desired result. However, such a solution requires surgical interference with the system (new methods must be implemented) and cannot be considered correct: every new piece of functionality would require a change to the system's API. The direct approach also does not use the capabilities of the SMILA system and does not allow the index operations to be controlled in the standard way.

See also Command Pattern

Solution chosen

It was decided to use standard management agents, exactly as in the crawler controller management.
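The use case at the top of this page asks for the index to be managed through a JMX agent. In Java, that usually means a standard MBean registered with an `MBeanServer`. The sketch below uses only JDK classes (`javax.management`, `java.lang.management.ManagementFactory`). The `IndexAdmin` names, the `example.smila` ObjectName domain, and the in-memory index set are assumptions, and the sketch does not reproduce how the crawler controller management is implemented in SMILA.

```java
import java.lang.management.ManagementFactory;
import java.util.Set;
import java.util.TreeSet;
import javax.management.MBeanServer;
import javax.management.ObjectName;

/** Sketch: exposing index management operations through a JMX standard MBean. */
public class IndexManagementAgent {

  /** Standard MBean interface: its name must be the implementation class name + "MBean". */
  public interface IndexAdminMBean {
    void createIndex(String indexName);
    void deleteIndex(String indexName);
    void renameIndex(String oldName, String newName);
    String[] getIndexNames(); // exposed as the read-only attribute "IndexNames"
  }

  /** In-memory stand-in; a real agent would presumably delegate to the LuceneIndexService. */
  public static class IndexAdmin implements IndexAdminMBean {
    private final Set<String> indexes = new TreeSet<>();

    @Override public synchronized void createIndex(String indexName) { indexes.add(indexName); }
    @Override public synchronized void deleteIndex(String indexName) { indexes.remove(indexName); }
    @Override public synchronized void renameIndex(String oldName, String newName) {
      if (indexes.remove(oldName)) {
        indexes.add(newName);
      }
    }
    @Override public synchronized String[] getIndexNames() { return indexes.toArray(new String[0]); }
  }

  /** Registers the MBean under a hypothetical ObjectName and returns that name. */
  static ObjectName register(MBeanServer server) throws Exception {
    ObjectName name = new ObjectName("example.smila:type=IndexAdmin");
    server.registerMBean(new IndexAdmin(), name);
    return name;
  }

  public static void main(String[] args) throws Exception {
    MBeanServer server = ManagementFactory.getPlatformMBeanServer();
    ObjectName name = register(server);
    // A JMX client such as JConsole would issue the same operations remotely.
    String[] oneString = {String.class.getName()};
    server.invoke(name, "createIndex", new Object[] {"test_index"}, oneString);
    server.invoke(name, "renameIndex", new Object[] {"test_index", "archive_index"},
        new String[] {String.class.getName(), String.class.getName()});
    String[] names = (String[]) server.getAttribute(name, "IndexNames");
    System.out.println(String.join(",", names)); // archive_index
  }
}
```

With this design the pipelines stay untouched: management goes through the agent, and any JMX-capable tool can call the operations without SMILA-specific client code.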