SMILA/Documentation/Default configuration workflow overview

This page gives a short explanation of what happens behind the scenes when executing the "SMILA in 5 Minutes" example.

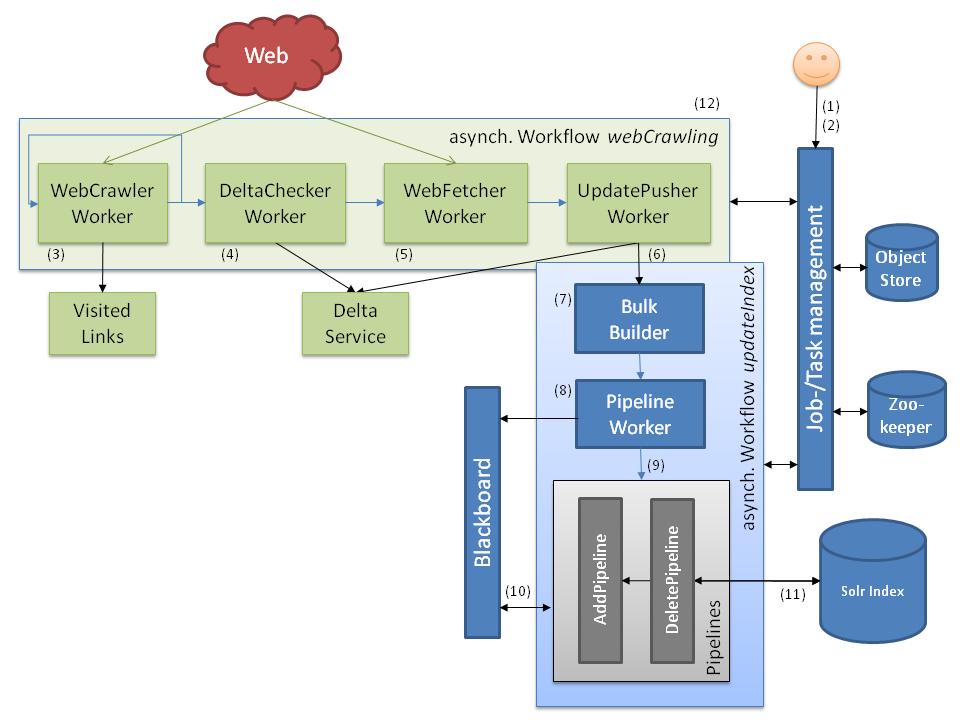

(Download this archive to get the original PowerPoint file of this diagram.)

When crawling a web site with SMILA, the following happens:

- The user starts a job with workflow updateIndex. Nothing else happens yet; the job waits for input to process.

- The user starts a job with workflow webCrawling in runOnce mode.

- The WebCrawler worker initiates the crawl process by reading the configured start URL. It extracts links and feeds them back to itself, and produces records with metadata and content. Additionally, it marks links as visited so that other crawler worker instances do not produce duplicates.

- The DeltaChecker worker reads the records produced by the crawler and asks the DeltaService whether the crawled resources have changed since a previous crawl run. Unchanged resources are filtered out; only changed and new resources are sent to the next worker.

- The WebFetcher worker fetches the content of resources that do not have content yet. In this case, these are the non-HTML resources, whose content the crawler worker did not need for link extraction.

- At the end of the crawl workflow, the UpdatePusher worker sends the crawled records with their content to the indexing job as added records and saves their current state in the delta service.

- Now the indexing job starts to work: The Bulkbuilder writes the records to be indexed into bulks, separated by whether they are to be added to or updated in the index, or deleted from it (which does not happen at this point).

- The PipelineProcessor worker picks up those record bulks and puts the records (in manageable numbers) on the blackboard ...

- ... and invokes a configured pipeline for either adding/updating or deleting records.

- The pipelets in the pipelines take the record data from the blackboard, transform the data, extract further metadata and plain text ...

- ... and manipulate the SolrIndex accordingly. The index can now be searched using yet another pipeline (not shown here).

- Finally (and not yet implemented), when the crawl workflow is done, the DeltaService can be asked for all records that have not been crawled in this run, so that delete records can be sent to the indexing workflow to remove these resources from the index.
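The delta handling in the steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not SMILA code: `ToyDeltaStore`, `crawl_run` and the string "fingerprints" are hypothetical names invented for this example, and the real DeltaService decides by its own criteria whether a resource has changed.

```python
from typing import Dict, List, Set


class ToyDeltaStore:
    """Hypothetical in-memory stand-in for the DeltaService (not the SMILA API)."""

    def __init__(self) -> None:
        self._saved: Dict[str, str] = {}  # url -> fingerprint saved by the last push
        self._seen: Set[str] = set()      # urls checked during the current run

    def start_run(self) -> None:
        self._seen = set()

    def is_new_or_changed(self, url: str, fingerprint: str) -> bool:
        """DeltaChecker step: remember the visit, compare with the saved state."""
        self._seen.add(url)
        return self._saved.get(url) != fingerprint

    def save(self, url: str, fingerprint: str) -> None:
        """UpdatePusher step: record the state that was sent to indexing."""
        self._saved[url] = fingerprint

    def not_crawled_in_run(self) -> List[str]:
        """Final step: saved resources that the current run never saw."""
        return sorted(set(self._saved) - self._seen)


def crawl_run(delta: ToyDeltaStore, crawled: Dict[str, str]) -> List[str]:
    """Return the URLs that would be pushed to the indexing job."""
    delta.start_run()
    pushed = []
    for url, fingerprint in crawled.items():
        if delta.is_new_or_changed(url, fingerprint):
            delta.save(url, fingerprint)
            pushed.append(url)
    return pushed


delta = ToyDeltaStore()
first = crawl_run(delta, {"/a.html": "v1", "/b.pdf": "v1"})
second = crawl_run(delta, {"/a.html": "v1", "/b.pdf": "v2", "/c.html": "v1"})
third = crawl_run(delta, {"/a.html": "v1", "/c.html": "v1"})
to_delete = delta.not_crawled_in_run()
print(first, second, third, to_delete)
```

In this sketch the first run pushes both resources, the second run pushes only the changed and the new one, and the third run pushes nothing but reports `/b.pdf` as a candidate for a delete record because it was not crawled in that run.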

All records produced in this process are stored in the ObjectStore while being passed from one worker to the next. The Job/TaskManagement uses Apache ZooKeeper to coordinate the work when multiple SMILA nodes are used to parallelize it.
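The hand-off through a store can be pictured with a small Python sketch. Everything in it is an assumption made for illustration: a plain dictionary stands in for the ObjectStore, the bucket names are invented, and `run_worker` and `fetch_missing_content` are hypothetical helpers, not SMILA functions.

```python
from typing import Callable, Dict, List

Record = Dict[str, str]
ToyObjectStore = Dict[str, List[Record]]  # bucket name -> stored records


def run_worker(
    store: ToyObjectStore,
    worker: Callable[[List[Record]], List[Record]],
    in_bucket: str,
    out_bucket: str,
) -> None:
    """Read the input records from the store, process them, store the result."""
    store[out_bucket] = worker(store.get(in_bucket, []))


def fetch_missing_content(records: List[Record]) -> List[Record]:
    """Fetcher-like step: add placeholder content to records that have none."""
    return [r if "content" in r else {**r, "content": "<fetched>"} for r in records]


store: ToyObjectStore = {
    "crawled": [{"url": "/a.html", "content": "<html>"}, {"url": "/b.pdf"}]
}
run_worker(store, fetch_missing_content, "crawled", "fetched")
print(store["fetched"])
```

Because each worker only reads from and writes to the store, the next worker can pick up the output on any node; coordinating who processes which bulk is the part that the Job/TaskManagement handles and that this sketch leaves out.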

Crawling a file system works similarly: the "fileCrawling" workflow just replaces the "WebCrawler" and "WebFetcher" workers with "FileCrawler" and "FileFetcher" workers.
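The relationship between the two workflows can be expressed as a simple substitution. The following Python sketch models a workflow as an ordered list of worker names taken from the description above; this is a simplification chosen for illustration, not SMILA's actual workflow definition format, and `derive_workflow` is a hypothetical helper.

```python
from typing import Dict, List

# Worker chain of the web crawling workflow, as described on this page.
WEB_CRAWLING: List[str] = ["WebCrawler", "DeltaChecker", "WebFetcher", "UpdatePusher"]


def derive_workflow(base: List[str], replacements: Dict[str, str]) -> List[str]:
    """Copy a worker chain, swapping only the workers named in replacements."""
    return [replacements.get(worker, worker) for worker in base]


FILE_CRAWLING = derive_workflow(
    WEB_CRAWLING,
    {"WebCrawler": "FileCrawler", "WebFetcher": "FileFetcher"},
)
print(FILE_CRAWLING)
```

The DeltaChecker and UpdatePusher steps stay in place, which is why delta checking and pushing to the indexing job behave the same for both sources.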