Cleo’s Use of Hudson As a Continuous Integration Platform

By Stuart Lorber, Release Engineer, Cleo

LinkedIn: http://www.linkedin.com/in/stuartlorber

Introduction

Cleo is a provider of business integration software and services, enabling enterprises to connect with their customers and suppliers, and to integrate business processes and information with internal or cloud-based applications. Cleo has approximately 80 employees, including a Product Development team of 27. Seven of the 27 are full-time, dedicated product testers.

The Challenge

Four years ago we started to incorporate Agile and Continuous Integration techniques into our development processes to produce software with greater speed and fewer defects.

The build system we were using, a commercial product, was not capable of producing or maintaining the number of builds and artifacts we needed and did not offer scalability.

We evaluated a few products and decided to switch to Hudson because it was an open source product with a good reputation, a large install base and it was designed to support continuous integration.

Shortly after, we started to evaluate Jenkins as a replacement for Hudson because Jenkins was maintaining most of the Hudson plugins and appeared to have more community activity. We abandoned this idea after seeing that Jenkins’ core functionality was less reliable than Hudson’s. We also saw, at EclipseCon 2012, the commitment to maintaining and improving the Hudson product.

With the rewrite of Hudson for version 3 and particularly with the release of version 3.1 there is no comparison. The Eclipse Project commitment, plugin support, stability, team support and a huge user community made it the clear choice.

Cleo’s Environment

We started with Hudson version 2.1 running on a server with 1 TB of storage on 15k RPM disk drives (RAID 0), 32 GB of RAM and dual hex-core processors.

Our initial system and job configurations addressed the building and testing of a new product offering.

This effort allowed us to have a reliable continuous build cycle that gave easy access to new builds and test results.

Team Implementation

We then started to research how to implement a way to quickly create a “branch of jobs” to match a code branch. We support multiple versions of each of our products so we need similar or identical sets of jobs for each product version.

In addition we need similar support for developers who will be implementing major changes or large features and ultimately need to merge the branch back into the main trunk.

Here’s an example:

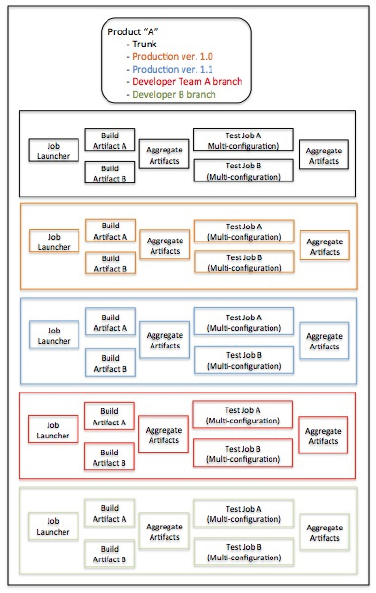

This is a simplified picture of the type of environment we need in order to support one product. In this example we have our trunk, several branches for previous product versions and several branches for developers.

In reality we have multiple products, more versions of each product and more complicated build flows.

Our “main” Hudson project has about 40 jobs, so manually copying / modifying / joining each job takes a long time and can be extremely error prone. We wanted the ability to provide a developer or a team of developers a “branch of jobs” within an hour. Once Hudson 3.x came out with the Team Management Concept and accompanying team copy feature we were able to copy the entire set of a team’s jobs within seconds. All references important to us are updated so the only additional task to provide a working set of jobs to developers is to update the SVN locations and credentials. The entire process takes about an hour.

Hardware Configurations

Our original Hudson server was running Linux and did all processing for Linux-based jobs. Windows jobs were processed on VirtualBox “Virtual Machines” that were configured as Hudson nodes running on this same server.

As our processing load increased we started to use standalone PCs as slaves that were configured as Hudson nodes. We soon realized that this gave us more flexibility to increase processing power. Our slaves started out as low-end 64-bit PCs with a minimum of 4 GB of RAM. We installed Windows or Linux, as appropriate.

When we reevaluated our server needs we decided that we should focus on a machine with a large amount of reliable storage (RAID 10). Disk and processor speed were secondary, although we still have a dual hex-core machine with 32 GB of RAM. The server was configured with 7,200 RPM drives based on price and effective throughput on a gigabit switch. Our entire build environment is on its own switch to facilitate fast transfer of artifacts between the master and slaves.

We have all processing done on slaves and use the server to run Hudson and store artifacts. This allowed us to spend less money on a server and extend its life by not requiring a server upgrade as our processing needs increase.

We also decided that our slaves will be relatively inexpensive “off the shelf” PCs that can be purchased and brought online quickly. The current configuration for a slave is a Dell PC with an Intel Core i7 processor, 32 GB of RAM and dual SSD drives. (Except for our increasing the RAM to 32 GB this is one of the standard Dell configurations.)

We decided that using a cloud solution like Amazon’s Elastic Compute Cloud (EC2) is not going to work for us at this time because of the large amounts of data we need to move to and from slaves.

Examples of the amount of data we would need to transfer include:

- Build artifacts - ~15 GB. This does not include the roughly 100,000 files needed to generate a build artifact.

- Installers - ~4 GB.

- Test jobs - ~15 GB. This includes moving the artifacts under test to a slave as well as any test results that are returned by the test jobs.

- Aggregator jobs - ~10 GB. This includes all of the upstream jobs that are included in a particular build’s job flow.

- An ISO image - ~10 GB. This includes all of the artifacts to be included in the ISO as well as the ISO image.

On average, we move more than 80 GB of data to and from our slaves every day for our standard nightly build of our main Hudson project (including branches).

This does not include additional weekly jobs we run or build flows for our other products.
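To put these volumes in perspective, here is a rough back-of-the-envelope sketch. It sums the per-flow figures listed above and estimates an ideal transfer time for the quoted 80 GB nightly total. The 1,000 Mbit/s figure corresponds to the dedicated gigabit switch; the 100 Mbit/s uplink is a hypothetical value chosen only for comparison, and the calculation ignores protocol overhead and contention.

```python
# Approximate per-flow transfer volumes quoted in the list above, in GB.
transfers_gb = {
    "build artifacts": 15,
    "installers": 4,
    "test jobs": 15,
    "aggregator jobs": 10,
    "ISO image": 10,
}


def transfer_hours(volume_gb: float, link_mbit_per_s: float) -> float:
    """Ideal transfer time in hours, ignoring overhead and contention."""
    megabits = volume_gb * 8 * 1000  # 1 GB = 8,000 megabits (decimal units)
    return megabits / link_mbit_per_s / 3600


listed_total_gb = sum(transfers_gb.values())
nightly_total_gb = 80  # quoted nightly figure, including branches

print(f"Listed per-flow total: {listed_total_gb} GB")

# 100 Mbit/s is an assumed uplink speed, not a figure from the article.
links = [("gigabit LAN", 1000), ("hypothetical 100 Mbit/s uplink", 100)]
for name, mbit_per_s in links:
    hours = transfer_hours(nightly_total_gb, mbit_per_s)
    print(f"{name}: {hours:.2f} h for {nightly_total_gb} GB")
```

Even under these idealized assumptions, a tenfold slower link turns minutes of transfer into hours, which is the kind of cost that weighs against moving slaves off the local network.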

We also made the decision to run all of our jobs on VirtualBox VMs on these slaves rather than directly on the machines.

This helps us in several ways:

- Once a VM is created it can be cloned and moved to another machine. Therefore, we create “template” VMs for each of our build/test needs and we clone them as needed for load distribution. A clone is fully “pre-configured” for its function and the only change required is an update to point to a new node configuration on the Hudson server.

- We’re using “labels” as part of our node configurations so we effectively have slave pools. We point our job configurations to these pools rather than individual nodes. If we need more horsepower for a set of jobs we can clone a VM and it’s added to the pool. There’s no need to change a job configuration. If we remove a VM it is the same process; no change to a job configuration.

- We try to always have redundant clones in this type of configuration. If a node goes offline, job processing continues with reduced capacity rather than stopping.

- We’re also distributing these cloned VMs across physical slaves. This is done so that if a physical slave crashes our processing can still continue.

- Using VMs we can order a new slave machine, install VirtualBox and copy VMs to the new slave, define nodes and have more horsepower available within hours.

- If we need to load balance one of our slaves we can just move the VM to a different slave. There’s no need to change any configuration on the VM or in Hudson node configuration.
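The label-based pooling described above can be illustrated with a small toy model. This is a sketch of the idea only, not Hudson's scheduler: the `NodePool` class, the node names and the label names are all hypothetical. The point it shows is that a job references a label, so adding or removing a cloned VM changes the pool without touching the job.

```python
from collections import defaultdict


class NodePool:
    """Toy model of label-based node selection (not Hudson's implementation)."""

    def __init__(self):
        self._nodes_by_label = defaultdict(set)

    def add_node(self, name, labels):
        # Register a node under every label it carries.
        for label in labels:
            self._nodes_by_label[label].add(name)

    def remove_node(self, name):
        # Drop the node from every label pool it belonged to.
        for nodes in self._nodes_by_label.values():
            nodes.discard(name)

    def candidates(self, label):
        # Nodes currently eligible to run a job tied to this label.
        return sorted(self._nodes_by_label.get(label, ()))


pool = NodePool()
pool.add_node("win7-vm-a", {"windows", "win7"})  # hypothetical node names
pool.add_node("win7-vm-b", {"windows", "win7"})  # redundant clone on another host

job_label = "win7"  # the job configuration references only the label

print(pool.candidates(job_label))

# Clone a VM into the pool and retire another; the job label is unchanged.
pool.add_node("win7-vm-c", {"windows", "win7"})
pool.remove_node("win7-vm-a")
print(pool.candidates(job_label))
```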

VMs allow us to have more test environments than we have machines. Our products support all active versions of Windows (currently about 7) and several versions of Linux (currently 4 primary versions). With the use of VMs and multi-configuration jobs we can quickly change our job configurations to run on any variety of operating systems. It is critical to us to have this flexibility as we find differences in behavior between Windows and Linux and between versions of Windows and Linux. These differences used to be found through manual testing or by customers; now we find most of them during our automated test processes.

Our primary Hudson job runs approximately 5,000 unique tests nightly. This does not include the other products we have migrated to Hudson. Since we run the same tests on multiple operating systems we are running more than 13,500 tests nightly and the number is growing. Test creation and resulting test results are an integral part of our development process. At this point, a build without accompanying test results is considered almost useless.

We currently have 15 slaves. By the end of 2015 we’re planning to have approximately 18 slaves. Each slave runs 3 to 6 VMs so by the end of the year we will have a total of about 80 Hudson nodes.

We are currently evaluating using a cloud solution like Amazon’s Elastic Compute Cloud (EC2) to augment our slave farm to provide flexibility to add capacity and redundancy.

Processing Jobs

We are processing jobs a minimum of 12 hours per day. Our nightly build cycle takes approximately 10 – 12 hours to complete and we do continuous builds throughout the day to resolve test failures and to test code changes. On average we are processing jobs 16 to 18 hours per day.

The product that is currently using the most Hudson resources generates artifacts of between 250 MB and 500 MB. Since we support Windows, Mac and Linux a particular job might generate upwards of 5 GB of archived artifacts. With nightly builds, installers and ISO generation we generate approximately 20 GB of archived artifacts nightly. This number will be increasing as we migrate all of our builds to Hudson, support more versions of our products and have more developer branches. For builds, we keep a history of 10 to 20 jobs. For test jobs, we keep a history of 90 days to allow us to track test count and success / failure trends. This does not include promoted builds that are kept indefinitely.
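The retention rules above can be modeled in a few lines. The sketch below is an illustration under stated assumptions, not Hudson's own mechanism: it reads "a history of 10 to 20 jobs" as the most recent 10 to 20 runs of a job, and the `Build` record and `keep_builds` function are hypothetical names introduced for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Build:
    number: int
    finished: date
    promoted: bool = False


def keep_builds(builds, max_count=None, max_age_days=None, today=None):
    """Return the builds a count- and/or age-based retention rule would keep.

    Promoted builds are always kept, mirroring the article's statement
    that promoted builds are retained indefinitely.
    """
    today = today or date.today()
    newest_first = sorted(builds, key=lambda b: b.number, reverse=True)
    kept = []
    for rank, build in enumerate(newest_first):
        within_count = max_count is None or rank < max_count
        within_age = (
            max_age_days is None
            or (today - build.finished) <= timedelta(days=max_age_days)
        )
        if build.promoted or (within_count and within_age):
            kept.append(build)
    return kept


# Thirty nightly runs of a hypothetical build job; run 3 was promoted.
today = date(2015, 6, 30)
builds = [
    Build(n, today - timedelta(days=30 - n), promoted=(n == 3))
    for n in range(1, 31)
]

kept = keep_builds(builds, max_count=20, today=today)
print(sorted(b.number for b in kept))
```

For a test job, the same function would be called with `max_age_days=90` and no count limit.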

Our Success Story

Our newest product required an updated interface to one of our existing products. Because of the move to Hudson, all of our jobs are now associated with teams, so supporting this new team took only three steps:

- Cut a branch in our source repository.

- Copy the “main” branch team using the copy plugin.

- Update SVN references to the branch, as appropriate, in the new team’s jobs.
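The last step, retargeting SVN references, lends itself to scripting when a team has dozens of jobs. The sketch below is only an illustration: the XML fragment is a simplified stand-in for a job configuration rather than Hudson's actual schema, and the repository URLs, the `remote` element name and the `retarget_svn` helper are assumptions made for the example.

```python
import xml.etree.ElementTree as ET

# Simplified, hypothetical stand-in for a job configuration file.
SAMPLE_CONFIG = """<project>
  <scm>
    <locations>
      <location>
        <remote>https://svn.example.com/repo/product/trunk/core</remote>
      </location>
    </locations>
  </scm>
</project>"""


def retarget_svn(config_xml: str, old_prefix: str, new_prefix: str) -> str:
    """Rewrite every <remote> URL that starts with old_prefix."""
    root = ET.fromstring(config_xml)
    for remote in root.iter("remote"):
        if remote.text and remote.text.startswith(old_prefix):
            remote.text = new_prefix + remote.text[len(old_prefix):]
    return ET.tostring(root, encoding="unicode")


updated = retarget_svn(
    SAMPLE_CONFIG,
    "https://svn.example.com/repo/product/trunk",
    "https://svn.example.com/repo/product/branches/new-interface",
)
print(updated)
```

Matching on a URL prefix rather than replacing the word "trunk" anywhere in the file avoids touching unrelated settings that happen to contain the same text.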

All development work for this updated interface was done in the branch and all builds and test jobs were run against the branch using the new team job set until all test results matched our trunk.

At this point the branch was merged back into trunk.

The result was one test failure.

A traditional code merge from a branch into trunk usually results in compilation and testing errors that can take days to resolve. This results in disruptions to developers not involved in the branch development.

This major refactoring was done without any disruption to the developers still working against our trunk. After the successful code merge the team and its jobs were deleted.

The Hudson Team’s Success Story

The Hudson development team has been extremely responsive to product improvements as well as any reported bugs.

The product improvement that most affected our product development cycle and made Hudson the clear choice for our company is the “team” concept that allows multiple versions of the same product to be developed quickly and simultaneously. This solved our problem of being able to effectively build and test these multiple product versions. It also provides greater visibility, which has greatly improved our development velocity.

Improvements and bug fixes include:

- Modifications to their team copy plugin to make branching and the associated team creation easier.

- Enabling/disabling of selected builders.

- Adding commenting to selected builders.

- Increased stability that allows the Hudson system to start even when certain types of data corruption are present.

- Upgrade of their AWS plugin.

Summary

Our “Success Story” is just one of many.

Before we implemented Hudson, our development process was based on creating a build when we thought we needed it and then manually testing the build.

Now, Hudson drives our software processes.

- We have polling jobs that will inform developers of test failures within minutes of a source commit.

- We have a nightly build process that builds and tests our software and provides reporting on software quality.

Hudson has proven to be an invaluable part of our development processes. Migrating to Hudson has helped Cleo evolve its software development process from a traditional waterfall methodology to a flexible agile environment. We are now able to deliver significantly higher quality software across development sub-teams, our product testing group and, most importantly, to our customers.