SMILA/Specifications/RecordStorage

Contents

- 1 Description

- 2 Discussion

- 3 RecordStorage Interface

- 4 RecordStorage based on JDBC database

- 5 RecordStorage based on relational database using eclipseLink

- 6 Serialization of Records

- 7 PoC Blackboard using RecordStorage instead of XMLStorage

- 8 Links

Description

As Berkeley DB XML will not be available in Eclipse in the near future, we need an open source alternative to store record metadata. There is no requirement to use an XML database; any storage that allows us to persist record metadata will suffice.

Discussion

prefer JDBC implementation of XS (KISS pattern)

- TM: As far as I know, we only have a problem with Berkeley DB XML (BDX) as long as it is a required and not just a works-with dependency. My idea so far is to provide an implementation for XS (XML Storage) that is based on a simple JDBC solution, like you have described yourself on this page. By this we at least provide a working solution. Anyone who wants to use SMILA for large scale applications with the full functionality of XS can do so in the scope of Eccenca (CE), which then includes BDX.

I think such a path is fully legitimate and doesn't create too much work for us now, unlike a JPA/EclipseLink implementation for XS, which would include far-reaching changes to core objects.

- Daniel Stucky: Thanks for your feedback, Tom. I will try to clarify some issues. The basic RecordStorage interface (with 4 methods) is all we need from the Blackboard point of view to access records. All access is done based on record Ids; no query support is needed. By introducing the RecordStorage interface instead of the currently used XMLStorage and XSSConnection interfaces we gain an abstraction layer that allows us to change the underlying implementation more easily. So you could use the already available XMLStorage implementation using Berkeley DB inside a RecordStorageXmlImpl. Or we could create some JDBC or eclipseLink based implementations. I also propose to provide additional functionality for query support (be it XQJ, SQL or whatever) not in the RecordStorage interface but via separate interfaces, e.g. RecordStorageQuery. I guess that these interfaces will depend on the implementation technology used and will evolve over time, so limiting ourselves to just one interface seems a bad idea. Then the RecordStorageXmlImpl could also support XQJ queries via such a second interface, which in turn could be used by an XQJ crawler for mashup use-cases. The default implementation of a RecordStorage would not need to implement any of those additional query interfaces.

- TM: Ahhh. I kind of missed the point that you wanted to add the RS interface as its own layer. I was thinking: rename the XSS interface...

I think adding such an interface won't hurt much, and I kind of like the freedom it gives us.

Regarding the optional interfaces: how would you go about getting those as a client? Would you try to

- 1) request a RecordStoreQuery OSGi service that might not be there, depending on your config?

- 2) cast the obtained RS service to the RSQ interface and see if that fails?

- Daniel Stucky: I did not think too deeply about this, but I would say definitely option 1). Thinking in Declarative Services, a MashUpService would require a RecordStoreQuery service and could not be activated without one. Thus the whole mashup functionality would not be available at runtime. Of course one has to think about clean exception handling and so on, but that's my basic idea.

JPA/EclipseLink vs. Performance

- TM: Having worked with Hibernate, which is another ORM tool, I don't believe that EL/JPA is the way to go. IMO EL is likely to create too much overhead, both in programming effort and performance.

From past experience with Hibernate I have learned that mass data handling is not the use case for ORM tools. They even say so in a Hibernate book: one should look for other solutions if mass data handling is the primary use case. The reason for this: by default ORM tools create entity object graphs from the data stored in the DB and handle all reads/updates through these objects. This is VERY expensive. Of course it would be possible to do plain SQL under the hood in conjunction with DAOs, but we could do that without the ORM tool anyway.

- Daniel Stucky: I set up a really simple test comparing the current XMLStorage and my RecordStorage implementation, using some different settings. The test is a standard import of a filesystem source with 2500 XML files. The BerkeleyDB and the DerbyDB ran in process, whereas the Oracle DB ran on a remote server.

| Storage | Runtime |
| --- | --- |
| XMLStorage with BerkeleyDB | ~ 8:00 min |
| RecordStorage with Derby (no query attributes) | ~ 6:30 min |
| RecordStorage with Derby (with query attributes) | ~ 7:00 min |
| RecordStorage with Oracle (no query attributes) | ~ 5:00 min |
| RecordStorage with Oracle (with query attributes) | ~ 5:15 min |

Why JPA/EclipseLink ?

- TM: Given my remarks about performance: can you point out where you see the advantages of using EL/JPA in SMILA?

- Daniel Stucky: OK, some reasons to choose eclipseLink/JPA:

- in the long term we need to support relational databases as storage backends

- JPA is an open standard

- eclipseLink is an Eclipse project, so there are no license or CQ issues (Hibernate is no option because of its license)

- database independent, easy switching by configuration

- also an interesting technology for persisting delta indexing data

- we already use eclipseLink/JPA in ODE

- TM:

- > in the long term we need to support relational DBs as storage backends

- For Record/XML Storage? I wasn't aware of that! In what scenario? Why won't BDX do it?

So far I saw relational DBs as second-best solutions that we put only little effort into.

RecordStorage Interface

Here is a proposal for a RecordStorage interface. It contains only the basic functionality without any query support.

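```java
interface RecordStorage {
  // returns the record stored under the given Id
  Record loadRecord(Id id);

  // persists the given record under its Id
  void storeRecord(Record record);

  // deletes the record with the given Id
  void removeRecord(Id id);

  // checks whether a record with the given Id is stored
  boolean existsRecord(Id id);
}
```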
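To illustrate how small the contract is, here is a minimal in-memory sketch of the four operations. It is not part of the proposal: `SimpleId`, `SimpleRecord`, `SimpleRecordStorage` and `InMemoryRecordStorage` are hypothetical stand-ins for the SMILA types so that the example compiles on its own, and a real implementation would of course persist the data.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical stand-in for the SMILA Id type, reduced to what the sketch needs.
final class SimpleId {
  private final String source;
  private final String key;

  SimpleId(String source, String key) {
    this.source = source;
    this.key = key;
  }

  String getSource() {
    return source;
  }

  @Override
  public String toString() {
    return source + ":" + key;
  }
}

// Hypothetical stand-in for the SMILA Record type.
final class SimpleRecord {
  private final SimpleId id;
  private final String metadata;

  SimpleRecord(SimpleId id, String metadata) {
    this.id = id;
    this.metadata = metadata;
  }

  SimpleId getId() {
    return id;
  }

  String getMetadata() {
    return metadata;
  }
}

// The four basic operations, typed on the stand-ins above.
interface SimpleRecordStorage {
  SimpleRecord loadRecord(SimpleId id);

  void storeRecord(SimpleRecord record);

  void removeRecord(SimpleId id);

  boolean existsRecord(SimpleId id);
}

// All access goes through the string form of the Id; no query support is needed.
final class InMemoryRecordStorage implements SimpleRecordStorage {
  private final Map<String, SimpleRecord> records = new ConcurrentHashMap<>();

  @Override
  public SimpleRecord loadRecord(SimpleId id) {
    return records.get(id.toString());
  }

  @Override
  public void storeRecord(SimpleRecord record) {
    if (record == null || record.getId() == null) {
      throw new IllegalArgumentException("record and its Id must not be null");
    }
    records.put(record.getId().toString(), record);
  }

  @Override
  public void removeRecord(SimpleId id) {
    records.remove(id.toString());
  }

  @Override
  public boolean existsRecord(SimpleId id) {
    return records.containsKey(id.toString());
  }
}
```

Such an implementation could also serve as a test double for the Blackboard, since the Blackboard only relies on these four methods.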

RecordStorage based on JDBC database

At the moment all access to records is based on the record ID. The record ID is the primary key when reading/writing records. It would be easy to store the records in a relational database, using just one table with a column for the record ID (the primary key) and a second one to store the record itself. The record could be stored as a BLOB or CLOB:

- BLOB: the record is just serialized into a byte[] and stored as a BLOB

- CLOB: the record's XML representation could be stored in a CLOB. Extra method calls to parse/convert the record from/to XML are needed when reading/writing the records (a performance impact in comparison to using a BLOB). But this would offer some options to include WHERE clauses accessing the CLOB in SQL queries

Because the String representation of IDs can be really long, an alternative could be to store a hash of the String. (This hash has to be computed whenever the database is accessed.) In addition one could add another column to store the source attribute of the record ID. This would allow easy access to all records of a data source to handle the use-case "reindexing without crawling".
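The hash idea could be sketched as follows. The text above does not name a hash function; SHA-256 and the helper class `IdHasher` are assumptions made for this example. The result is a fixed-length key of 64 hex characters regardless of how long the ID string is.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

// Hypothetical helper: turns an arbitrarily long ID string into a fixed-length key.
final class IdHasher {
  private static final char[] HEX = "0123456789abcdef".toCharArray();

  private IdHasher() {
  }

  // Returns the SHA-256 digest of the UTF-8 encoded ID string as 64 lowercase hex characters.
  static String hashId(String idString) {
    try {
      MessageDigest digest = MessageDigest.getInstance("SHA-256");
      byte[] hash = digest.digest(idString.getBytes(StandardCharsets.UTF_8));
      StringBuilder hex = new StringBuilder(hash.length * 2);
      for (byte b : hash) {
        hex.append(HEX[(b >> 4) & 0x0f]).append(HEX[b & 0x0f]);
      }
      return hex.toString();
    } catch (NoSuchAlgorithmException e) {
      // SHA-256 must be supported by every Java platform implementation
      throw new IllegalStateException("SHA-256 not available", e);
    }
  }
}
```

With a hashed key the original ID string can no longer be recovered from the key column, so it has to be kept elsewhere (for example inside the serialized record) if it is needed.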

For advanced use-cases (e.g. mashup) query support is needed (compare XQJ), e.g. to select all records of a certain mime type. It would be possible to add more columns or join tables for selected record attributes. Another option is to post-process the selected records, filtering out those that do not match the query filter. This is functionally equivalent to an SQL select, but of course much slower, because every candidate record has to be loaded and deserialized first.
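A minimal sketch of the post-processing option, assuming for illustration that each loaded record exposes its attributes as a simple `Map<String, String>` (the real SMILA data model is richer). `PostFilter` is a hypothetical helper name.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical helper: filters already loaded records in memory instead of in the database.
final class PostFilter {
  private PostFilter() {
  }

  // Keeps only those records whose attribute 'name' has exactly the given value.
  static List<Map<String, String>> filterByAttribute(
      Iterable<Map<String, String>> records, String name, String value) {
    List<Map<String, String>> matches = new ArrayList<>();
    for (Map<String, String> record : records) {
      if (value.equals(record.get(name))) {
        matches.add(record);
      }
    }
    return matches;
  }
}
```

The loop makes the cost visible: every record is touched, whether it matches or not.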

When implementing a JDBC RecordStorage one should take care to use database neutral SQL statements, or make the statements configurable. A good practice could be to implement the reading/writing in DAO objects, so that database specific implementations of the DAOs could be provided to make use of special features. Most databases offer improved support for BLOBs and CLOBs.
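One way to keep the statements configurable is sketched below. The class names, the property keys and the table layout (a table RECORDS with columns ID and RECORD) are assumptions for this example; the defaults use plain SQL and can be overridden per database through a properties object.

```java
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.util.Properties;

// Hypothetical holder for the SQL statements; defaults can be overridden by configuration.
final class RecordSqlStatements {
  static final String KEY_SELECT = "sql.record.select";
  static final String KEY_INSERT = "sql.record.insert";
  static final String KEY_DELETE = "sql.record.delete";
  static final String KEY_EXISTS = "sql.record.exists";

  private final Properties statements;

  RecordSqlStatements(Properties overrides) {
    Properties defaults = new Properties();
    defaults.setProperty(KEY_SELECT, "SELECT RECORD FROM RECORDS WHERE ID = ?");
    defaults.setProperty(KEY_INSERT, "INSERT INTO RECORDS (ID, RECORD) VALUES (?, ?)");
    defaults.setProperty(KEY_DELETE, "DELETE FROM RECORDS WHERE ID = ?");
    defaults.setProperty(KEY_EXISTS, "SELECT COUNT(*) FROM RECORDS WHERE ID = ?");
    // getProperty() falls back to the defaults for keys that are not overridden
    statements = new Properties(defaults);
    if (overrides != null) {
      statements.putAll(overrides);
    }
  }

  String get(String key) {
    return statements.getProperty(key);
  }
}

// Hypothetical DAO: all SQL text comes from RecordSqlStatements, none is hard coded here.
final class JdbcRecordDao {
  private final RecordSqlStatements sql;

  JdbcRecordDao(RecordSqlStatements sql) {
    this.sql = sql;
  }

  // Returns the serialized record for the given ID, or null if there is none.
  byte[] load(Connection connection, String id) throws SQLException {
    try (PreparedStatement statement = connection.prepareStatement(sql.get(RecordSqlStatements.KEY_SELECT))) {
      statement.setString(1, id);
      try (ResultSet result = statement.executeQuery()) {
        return result.next() ? result.getBytes(1) : null;
      }
    }
  }
}
```

A database specific DAO could then replace `JdbcRecordDao` where a vendor offers better BLOB handling, while the default keeps working with any JDBC driver.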

A good choice for an open source database is Apache Derby. The Apache License 2.0 is compatible with the EPL, the database has a low footprint (2MB) and can be used in process as well as in client/server network mode. It is also already committed to Orbit. For a production environment it would be easy to switch to any other JDBC database, like Oracle.

RecordStorage based on relational database using eclipseLink

EclipseLink offers various options to persist Java objects. Below we go into detail about using eclipseLink with JPA (Java Persistence API):

Overview on JPA

A mapping of Java classes to a relational database schema is created by using annotations in Java code or by providing an XML configuration. The classes to be persisted (called Entities) are in general represented by database tables, and their member variables by columns in those tables. There are some requirements to be met:

- an entity class must provide a no-argument constructor (either public or protected)

- entity classes must be top level classes, no enums or interfaces

There are two access types, of which only one can be used per entity:

- field based: direct access on member variables

- property based: Java Bean like access via getter- and setter-methods

An Entity must have a unique Id, this can be either

- a simple key (just one member variable) (@Id)

- a composite key using multiple member variables. This implies the usage of an additional primary key class that contains the same member variables (same name and type) as the entity class (@Id + @IdClass)

- an embedded key (@EmbeddedId)

Entities can have relations to other entities or contain embedded classes. Embedded classes are not entities themselves (but must meet the same requirements) and do not have a unique Id. They "belong" to the entity object embedding them. Version 1.0 of the JPA specification only demands support for one level of embedded objects. Whether more levels are supported depends on the implementation. Collections are also not allowed as embedded classes.

For more information see ejb-3_0-fr-spec-persistence.pdf.

PoC eclipseLink JPA RecordStorage using Oracle DB and Smila data model

- a JPA RecordStorage is always based on a concrete implementation of entities to persist. So it is not possible to implement a generic RecordStorage for any Record implementation but only for a specific implementation, in this case our default implementation RecordImpl, IdImpl , ...

- the classes of the Smila data model cannot be used as is, but have to meet the requirements of JPA (e.g. a no-argument constructor)

- the default implementation uses interfaces of other entity classes as types for member variables. These have to be replaced by concrete implementation types, for example

```java
@Entity
public class RecordImpl implements Record, Serializable {
  @EmbeddedId
  private IdImpl _id;
  ...
}
```

instead of

```java
@Entity
public class RecordImpl implements Record, Serializable {
  @EmbeddedId
  private Id _id;
  ...
}
```

- the Smila coding conventions cause some issues: the names for tables/columns are automatically generated by JPA using class and member variable names. The leading _ used in Smila for member variables leads to invalid SQL statements (at least with Oracle). Therefore it is necessary to define the names of all tables, join tables and columns manually by using annotations or XML configuration

- the Smila objects (Records, Id, Attribute, Annotation) are all structured recursively and most make also use of Collections or Maps as members

- eclipseLink supports N levels of embedded objects

- recursive embedding of objects is NOT supported

- Collections/Maps of embedded classes are not supported in JPA 1.0 (will be supported in JPA 2.0). eclipseLink offers a so called DescriptorCustomizer which could be used to implement such support (not considered in the PoC, needs further analysis)

- so it is not possible to model the Smila classes as embedded objects, which would have been the most natural approach as all data belongs to a single record object

- as an alternative one could try to model the relations between the various data model classes. This means all classes have to be annotated as separate Entity objects and each needs to have its own unique Id. Besides the Record object, no other object has a single member that could be used as a primary key. This is a major problem: for example, an object of type Attribute is only unique when the primary key spans all member variables, which in addition are Lists. Class LiteralImpl has another problem, as the member _value is of type Object, which is represented as a Blob in the database. But Blobs cannot be used as part of a primary key

- Daniel Stucky : we could check if automatically generated Ids are useful in this scenario. This means that all Smila Entity objects have to add a new member variable (e.g. int _persistentId ). Most likely this approach still fails because of the recursive structure

PoC eclipseLink JPA RecordStorage using Oracle DB and Dao Objects for Smila data model

The basic idea is to use a simpler data model than the Smila data model for persistence with JPA, by storing the Smila data model as serialized data in this simplified data model. This should remove the restrictions concerning recursion and Collection/Map support.

For the class RecordImpl a Dao class will be implemented, that serializes the Record object into a member variable:

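```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;

import javax.persistence.Column;
import javax.persistence.Entity;
import javax.persistence.Id;
import javax.persistence.Table;

// the import of the Smila Record interface is omitted here

@Entity
@Table(name = "RECORDS")
public class RecordDao implements Serializable {

  // string representation of the record Id, used as primary key
  @Id
  @Column(name = "ID")
  private String _idString;

  // the complete record in Java serialized form
  @Column(name = "RECORD")
  private byte[] _serializedRecord;

  // no-argument constructor required by JPA
  protected RecordDao() {
  }

  public RecordDao(Record record) throws IOException {
    if (record == null) {
      throw new IllegalArgumentException("parameter record is null");
    }
    if (record.getId() == null) {
      throw new IllegalArgumentException("parameter record has no Id set");
    }

    final ByteArrayOutputStream byteStream = new ByteArrayOutputStream();
    final ObjectOutputStream objectStream = new ObjectOutputStream(byteStream);
    objectStream.writeObject(record);
    objectStream.close();
    _serializedRecord = byteStream.toByteArray();
    _idString = record.getId().toString();
  }

  // restores the Record from the serialized bytes
  public Record toRecord() throws IOException, ClassNotFoundException {
    final ObjectInputStream objectStream = new ObjectInputStream(new ByteArrayInputStream(_serializedRecord));
    final Record record = (Record) objectStream.readObject();
    objectStream.close();
    return record;
  }
}
```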

In the database a table RECORDS will be created having the columns

- ID - VARCHAR

- RECORD - BLOB

The ID is a String representation of the Id of the Record. It is used as the primary key in the database. It is not used during deserialization of the Record. The Id object itself is automatically serialized with the Record object. The interface RecordStorage would be left unchanged. Internally the eclipseLink EntityManager works with RecordDao objects instead of RecordImpl objects.

Enhanced Dao classes for restricted selections of attributes

For advanced use cases there is a need to select records by queries. This could be realized by adding fixed and/or configurable Record attributes to the Dao class. These would be used to allow filtering on selected attribute value pairs. They are not used to reconstruct the Record during deserialization. They are just data stored in addition to the serialized record.

A fixed attribute could be the source element of the Record Id. It could be used to select records based on the data source (use-case build index without crawling). The RecordDao would be enhanced by the following member variable:

```java
@Column(name = "SOURCE")
private String _source;
```

That would result in an additional column SOURCE in the table RECORDS.

For configurable attributes the RecordDao would be enhanced with a list of Dao objects for attribute values called AttributeDao:

```java
@OneToMany(targetEntity = AttributeDao.class, cascade = CascadeType.ALL)
@JoinTable(name = "RECORD_ATTRIBUTES",
    joinColumns = @JoinColumn(name = "RECORD_ID", referencedColumnName = "ID"),
    inverseJoinColumns = @JoinColumn(name = "ATTRIBUTE_ID", referencedColumnName = "ATT_ID"))
List<AttributeDao> _attributes;
```

The AttributeDao class could be implemented like this:

```java
@Entity
@Table(name = "ATTRIBUTES")
public class AttributeDao {

  @Id
  @GeneratedValue(strategy = GenerationType.AUTO)
  @Column(name = "ATT_ID")
  private String _id;

  @Column(name = "ATT_NAME")
  private String _name;

  // eclipseLink specific mapping of a collection of simple values
  @BasicCollection(
      fetch = FetchType.EAGER,
      valueColumn = @Column(name = "ATT_VALUE"))
  @CollectionTable(
      name = "ATTRIBUTE_VALUES",
      primaryKeyJoinColumns = {@PrimaryKeyJoinColumn(name = "ATT_ID", referencedColumnName = "ATT_ID")})
  private List<String> _values;

  // no-argument constructor required by JPA
  protected AttributeDao() {
  }

  public AttributeDao(String name) {
    _name = name;
    _values = new ArrayList<String>();
  }

  public void addValue(String value) {
    _values.add(value);
  }
}
```

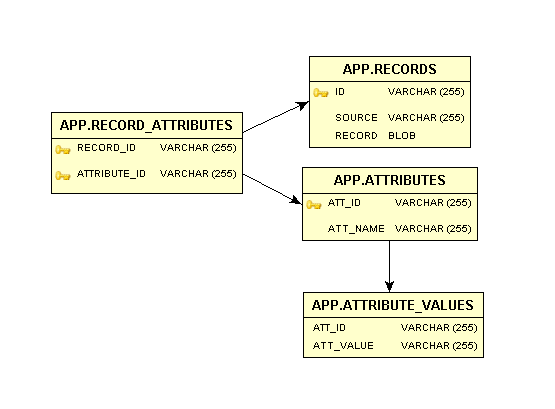

Summing up all modifications the final database schema could look like this:

A configuration tells the RecordStorage which Record attributes it should persist in the database in addition to the serialized Record. Neither Annotations nor Sub-Attributes are supported in this approach; only the Literals of the Attribute are stored. Of course more advanced enhancements are possible (but were not in the scope of this PoC).

The RecordStorage interface could add the following methods to support simple queries. Another option is to introduce another interface, e.g. RecordQueries to leave the basic Record functionality in a separate interface.

```java
interface RecordStorage {
  // simple query functionality
  Iterator<Record> findRecordsBySource(String source);

  Iterator<Record> findRecordsByAttribute(String name, String value);

  Iterator<Record> findRecordsByNativeQuery(String whereClause);
}
```

The first method can be realized by a JPQL NamedQuery. This is just an Annotation of the RecordDao class used by the RecordStorage implementation:

```java
@NamedQueries({
  @NamedQuery(name = "RecordDao.findBySource",
      query = "SELECT r FROM RecordDao r WHERE r._source = :source"),
})
```

An implementation of the method could look like this:

```java
public Iterator<Record> findRecordsBySource(final String source) {
  Query query = _em.createNamedQuery("RecordDao.findBySource");
  List<RecordDao> daos = query.setParameter("source", source).getResultList();
  // convert the RecordDao objects into Records during iteration
  return new RecordIterator(daos.iterator());
}
```

The helper class RecordIterator is needed to convert the RecordDao objects into Records during iteration.
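The source of RecordIterator is not shown on this page. A generic sketch of such a converting iterator could look like the following; `ConvertingIterator` is a hypothetical name, and the conversion is passed in as a function so that the example does not depend on the Smila classes.

```java
import java.util.Iterator;
import java.util.function.Function;

// Hypothetical generic variant of RecordIterator: converts each element lazily during iteration.
final class ConvertingIterator<D, R> implements Iterator<R> {
  private final Iterator<D> source;
  private final Function<D, R> converter;

  ConvertingIterator(Iterator<D> source, Function<D, R> converter) {
    this.source = source;
    this.converter = converter;
  }

  @Override
  public boolean hasNext() {
    return source.hasNext();
  }

  // Converts the next source element only when it is requested.
  @Override
  public R next() {
    return converter.apply(source.next());
  }
}
```

For RecordDao the converter would call toRecord() and would have to wrap its checked exceptions (IOException, ClassNotFoundException) in an unchecked one, because Iterator.next() cannot declare them.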

The second method cannot be expressed as a JPQL NamedQuery annotation in JPA 1.0, as JPQL lacks the functionality to select values in a Collection. Therefore we have to use an eclipseLink enhancement and generate the query in Java code:

```java
public Iterator<Record> findRecordsByAttribute(final String name, final String value) {
  final Session session = JpaHelper.getServerSession(_emf);
  final ReadAllQuery query = new ReadAllQuery(RecordDao.class);

  // select records having an attribute with the given name and value
  ExpressionBuilder record = new ExpressionBuilder();
  Expression attributes = record.anyOf("_attributes");
  Expression criteria = attributes.get("_name").equal(name);
  criteria = criteria.and(attributes.anyOf("_values").equal(value));
  query.setSelectionCriteria(criteria);

  // DISTINCT is not allowed in conjunction with Blobs
  query.dontUseDistinct();

  List<RecordDao> daos = (List<RecordDao>) session.executeQuery(query);
  return new RecordIterator(daos.iterator());
}
```

- Daniel Stucky : At the moment there is a problem as the generated SQL query uses DISTINCT which is not allowed in conjunction with Blobs

- Daniel Stucky : solved by using query.dontUseDistinct();

The last method is a more generic variant that allows selection of Records via native SQL. As the results of the SQL query must be Record objects, the caller may only provide the WHERE clause of the SQL statement, which is appended to the static SELECT * FROM RECORDS:

```java
public Iterator<Record> findRecordsByNativeQuery(final String whereClause) {
  // the clause is appended verbatim, so it must come from a trusted source
  String sqlString = "SELECT * FROM RECORDS " + whereClause;
  Query query = _em.createNativeQuery(sqlString, RecordDao.class);
  List<RecordDao> daos = query.getResultList();
  return new RecordIterator(daos.iterator());
}
```

All these enhancements are just suggestions. Other solutions to support selection of records via queries are possible.

PoC eclipseLink JPA RecordStorage using Derby DB and Dao Objects for Smila Data model

By using eclipseLink the concrete database used to persist the objects can easily be interchanged (provided that no database specific data types are used in the Entity mappings). Therefore it's quite easy to change the configuration from Oracle to Derby. In the file persistence.xml the following lines need to be changed:

```xml
<property name="eclipselink.jdbc.driver" value="org.apache.derby.jdbc.ClientDriver"/>
<property name="eclipselink.jdbc.url" value="jdbc:derby://localhost:1527/smiladb"/>
<property name="eclipselink.target-database" value="org.eclipse.persistence.platform.database.DerbyPlatform"/>
<property name="eclipselink.jdbc.password" value="app"/>
<property name="eclipselink.jdbc.user" value="app"/>
```

It is required that the file persistence.xml is part of the classpath of the bundle. Therefore in an OSGi scenario it is not really handy to use this file to configure the database to use for persistence. Instead we could use the same approach as ODE: the database properties are stored in a separate property file which is located in the regular configuration folder of the RecordStorage implementation bundle. These properties are read during activation of the service and are passed to the EntityManager. All static settings for the Persistence-Unit remain in file persistence.xml.
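Reading such a property file could be sketched like this. The class `PersistenceOverrides` is a hypothetical helper, and keeping only keys that start with "eclipselink." is an assumption made for this example. The resulting map is what would be handed to JPA, e.g. via `Persistence.createEntityManagerFactory(unitName, overrides)`, where the persistence unit name is whatever persistence.xml defines.

```java
import java.io.IOException;
import java.io.Reader;
import java.io.UncheckedIOException;
import java.util.HashMap;
import java.util.Map;
import java.util.Properties;

// Hypothetical helper: reads database settings from a property file in the configuration folder.
final class PersistenceOverrides {
  private PersistenceOverrides() {
  }

  // Loads the properties and keeps only the eclipselink.* entries as overrides.
  static Map<String, String> load(Reader source) {
    Properties properties = new Properties();
    try {
      properties.load(source);
    } catch (IOException e) {
      throw new UncheckedIOException(e);
    }
    Map<String, String> overrides = new HashMap<>();
    for (String name : properties.stringPropertyNames()) {
      if (name.startsWith("eclipselink.")) {
        overrides.put(name, properties.getProperty(name));
      }
    }
    return overrides;
  }
}
```

In the service this would be called once during activation, before the EntityManagerFactory is created, so that switching the database only requires editing the property file.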

Serialization of Records

Java offers an easy way to serialize objects by implementing the interface Serializable. Via an ObjectOutputStream arbitrary objects can be serialized in this way. This kind of serialization is used for example in remote communication (e.g. RMI). Unfortunately we cannot use this default serialization for persisting the Record objects. We do not want to store the attachments of a Record with the Record, as these are already stored by the BinaryStorage. But we want the attachments to be included in remote communication. Therefore we cannot override writeObject() of class RecordImpl. Instead, the serialization is done in the RecordDao constructor. Here not the complete record is serialized but only selected parts. That means that only the attachment names are serialized and persisted, not the attachments themselves:

```java
public RecordDao(Record record) throws IOException {
  if (record == null) {
    throw new IllegalArgumentException("parameter record is null");
  }
  if (record.getId() == null) {
    throw new IllegalArgumentException("parameter record has no Id set");
  }

  // collect only the names of the attachments, not their content
  List<String> attachmentNames = new ArrayList<String>();
  for (Iterator<String> names = record.getAttachmentNames(); names.hasNext(); ) {
    attachmentNames.add(names.next());
  }

  // serialize Id, metadata and attachment names, in this order
  final ByteArrayOutputStream byteStream = new ByteArrayOutputStream();
  final ObjectOutputStream objectStream = new ObjectOutputStream(byteStream);
  objectStream.writeObject(record.getId());
  objectStream.writeObject(record.getMetadata());
  objectStream.writeObject(attachmentNames);
  objectStream.close();

  _serializedRecord = byteStream.toByteArray();
  _idString = record.getId().toString();
  _source = record.getId().getSource();
}
```

In method toRecord() the serialized parts are deserialized and a new Record object is created:

```java
public Record toRecord() throws IOException, ClassNotFoundException {
  final ObjectInputStream objectStream = new ObjectInputStream(new ByteArrayInputStream(_serializedRecord));

  // read the parts in the same order in which they were written
  final org.eclipse.smila.datamodel.id.Id id = (org.eclipse.smila.datamodel.id.Id) objectStream.readObject();
  final MObject metadata = (MObject) objectStream.readObject();
  final List<String> attachmentNames = (List<String>) objectStream.readObject();

  final Record record = RecordFactory.DEFAULT_INSTANCE.createRecord();
  record.setId(id);
  record.setMetadata(metadata);
  // only the names are restored, the attachment content stays in the BinaryStorage
  for (String name : attachmentNames) {
    record.setAttachment(name, null);
  }
  objectStream.close();
  return record;
}
```

It also may make sense to implement a JDK independent serialization of these objects.
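What such a serialization could look like is sketched below for the simple parts of a record (Id string, source and attachment names). The format, the version number and the class `PortableRecordCodec` are assumptions for this example; the metadata object is left out and would need its own encoding, for instance its XML representation. The sketch uses DataOutputStream, whose byte layout (big-endian integers, length-prefixed modified UTF-8 strings) is documented and does not depend on class versions the way Java object serialization does.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

// Hypothetical codec with an explicit, versioned byte format instead of Java object serialization.
final class PortableRecordCodec {
  private static final int FORMAT_VERSION = 1;

  // Simple holder for the decoded parts.
  static final class Decoded {
    final String id;
    final String source;
    final List<String> attachmentNames;

    Decoded(String id, String source, List<String> attachmentNames) {
      this.id = id;
      this.source = source;
      this.attachmentNames = attachmentNames;
    }
  }

  private PortableRecordCodec() {
  }

  // Layout: version, id, source, number of attachment names, then each name.
  static byte[] encode(String id, String source, List<String> attachmentNames) {
    ByteArrayOutputStream bytes = new ByteArrayOutputStream();
    try (DataOutputStream out = new DataOutputStream(bytes)) {
      out.writeInt(FORMAT_VERSION);
      out.writeUTF(id);
      out.writeUTF(source);
      out.writeInt(attachmentNames.size());
      for (String name : attachmentNames) {
        out.writeUTF(name);
      }
    } catch (IOException e) {
      throw new UncheckedIOException(e);
    }
    return bytes.toByteArray();
  }

  // Reads the parts back in the order in which encode() wrote them.
  static Decoded decode(byte[] data) {
    try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
      int version = in.readInt();
      if (version != FORMAT_VERSION) {
        throw new IllegalArgumentException("unsupported format version " + version);
      }
      String id = in.readUTF();
      String source = in.readUTF();
      int count = in.readInt();
      List<String> names = new ArrayList<>();
      for (int i = 0; i < count; i++) {
        names.add(in.readUTF());
      }
      return new Decoded(id, source, names);
    } catch (IOException e) {
      throw new UncheckedIOException(e);
    }
  }
}
```

Note that writeUTF() is limited to strings whose encoded form fits into 65535 bytes, so very long Ids would need a different length prefix.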

PoC Blackboard using RecordStorage instead of XMLStorage

It is not a big deal to replace the XMLStorage with a new RecordStorage and adapt the Blackboard accordingly. We have to adapt the manifest, some methods, the declarative service description and of course the launch configuration. But there are some issues that need to be taken care of when implementing a RecordStorage:

- class AnnotatableImpl has a member of type org.apache.commons.collections.map.MultiValueMap that is not serializable. Thus the complete Record is not serializable. We have to find a replacement implementation

- JPA transactions must be handled safely (use commit and rollback)

- write access on the EntityManager must be synchronized, either by

- declaring the appropriate methods as synchronized. Not an ideal solution for our multi-threaded architecture

- using a separate EntityManager per transaction. A global EntityManager instance could be used for all READ access and every WRITE transaction creates a new EntityManager (currently implemented)

- using an EntityManager per Thread (using ThreadLocal). As the RecordStorage will be implemented as an OSGi service, there is a problem during deactivation of the service. It has no access to the thread specific EntityManagers and can't close them and remove them from the ThreadLocal list. This may lead to memory leaks.

- it may happen that some data (Ids, attribute names or values) exceeds the maximum size reserved for a database field. There is no real solution for this problem; perhaps we should always use the maximum possible size

- when used in an OSGi environment the EntityManager needs to have access to the JDBC driver classes. This means that the RecordStorage bundle manifest needs to import these packages (e.g. org.apache.derby.jdbc). Functionally this is not a problem, but it is a hard dependency on a JDBC driver, and it is no longer possible to use another driver by just configuring the database properties. The driver has to be included in the bundle's manifest first!

- Daniel Stucky: I posted a question concerning this behavior to the eclipseLink users list ([http://dev.eclipse.org/mhonarc/lists/eclipselink-users/msg02010.html]). The issue may be solved in a future release.

Links

- [http://db.apache.org/derby/] (Derby homepage)

- [http://wiki.eclipse.org/EclipseLink/] (EclipseLink homepage)

- [http://jcp.org/en/jsr/detail?id=220] (JPA Specification)