Notice: this Wiki will be going read only early in 2024 and edits will no longer be possible. Please see: https://gitlab.eclipse.org/eclipsefdn/helpdesk/-/wikis/Wiki-shutdown-plan for the plan.

Difference between revisions of "STEM Solvers"

| Line 73: | Line 73: | ||

=== Stochastic Solver === | === Stochastic Solver === | ||

| + | New in STEM 2.0, you can run STEM models stochastically. Some infectious processes are by nature stochastic. In a stochastic model, all transitions (e.g. between infectious compartments) are drawn from a discreet binomial distribution (using the Apache Commons math library). As a consequence, only an integer count of humans (or animals) are transitioned from one state to another. This is also what takes place in the real world. | ||

| + | |||

| + | The stochastic solver in STEM takes one input parameter, the stochastic seed. Varying the seed from one run to another will results in a different outcome and running multiple simulations varying the seed can give you an indication of the range of results possible. If the stochastic seed is the same between two runs the output of the model is guaranteed to be identical. | ||

Revision as of 06:58, 17 June 2013

When you create a new scenario in STEM, you need to specify which solver to use. A solver is simply the method used to determine how the state of a simulation changes from one time step to the next. Models for populations and diseases in STEM are all designed to carry out this change or derivative calculation given a current state. How the derivative is applied to determine the next state is where solvers differ.

Available Solvers

Users can select from STEM Native Solvers or the Apache Commons Math Solvers. The Native Solvers offer speed and ease of use. The Apache Solvers involve more overhead but can offer greater accuracy.

STEM Native Solvers

Finite Difference

The finite difference solver is the most straightforward (and fastest) solver available. It uses Euler's method and simply estimates the next value from the current value plus the derivative:

y(t+h) = y(t) +h*y'(t)

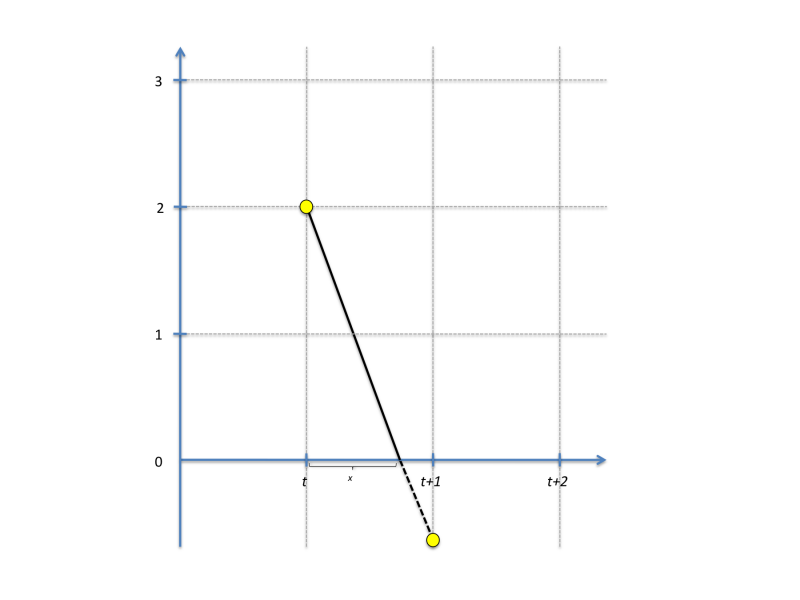

where h is the step size (default 1 day in STEM as defined by the sequencer). Label values in STEM are often constrained to a positive value. For instance, the count of a population can never go negative, and this is also true for any population assigned to a particular compartment state. When a constrained value goes negative, the finite difference solver does a partial step to avoid this as illustrated in this figure:

(the figure assumes h = 1). The next value is thus calculated as:

y(t+h) = y(t) + x*y'(t)+(1-x)*y'(t+x)

The algorithm finds the smallest x among all differential equations solved and rescales all label values accordingly. If necessary, multiple partial steps are carried out.

The finite difference solver is able to take advance of multi-core CPUs; each CPU is assigned a sub-set of the graph to work on.

Runge Kutta Cash-Karp

The Runge Kutta Cash-Karp solver is an adaptive step size ordinary differential equation integrator that calculates six function evaluations for each time step and estimates two solutions (of 4th and 5th order). The difference between the two solutions is the estimated error, and the solver can be calibrated to any desired degree of accuracy using the Relative Tolerance parameter. If the error exceeds the tolerance the step size is reduced. The Runge Kutta Cash-Karp solver can take advantage of multi-core CPUs, and each CPU is assigned a sub-set of the graphs to work on. The algorithm perform CPUs synchronization to establish the step size to use, ensuring that the same solution is reached no matter how many CPUs are running concurrently.

Apache Commons Math Solvers

These solvers use the Apache Commons Math library and its classes for solving ordinary differential equations. There is some overhead in using these solvers since the STEM data models (graphs/labels etc.) need to be adapted to the APIs expected by the Apache integrators. The advantage to using solvers from this list is that they (especially the Gregg Bulirsch Stoer and Dormand Prince 853) can be more accurate than the STEM Native Solvers. The Apache solvers are maintained and tested independently by the Apache Commons Math community.

Since the 1.4.1 release, all these solvers are able to take advantage of multi-core CPUs to increase performance.

All Apache solvers takes four parameters, Relative Tolerance, Absolute Tolerance, Min Step, and Max Step. Relative and Absolute Tolerance determine the tolerance in the estimated error, indirectly controlling the step size. Min Step and Max Step determine the minimum and maximum step size allowed. If the error tolerance cannot be satisfied by reducing the step size to the minimum allowed, an error is thrown and the simulation stops. The unit of the Max Step and Min Step parameters is in simulation cycles, i.e. one step of the sequencer.

Dormand Prince 853

This integrator is an embedded Runge-Kutta integrator of order 8(5,3) used in local extrapolation mode (i.e. the solution is computed using the high order formula) with stepsize control (and automatic step initialization) and continuous output. This method uses 12 functions evaluations per step for integration and 4 evaluations for interpolation.

This method is based on an 8(6) method by Dormand and Prince (i.e. order 8 for the integration and order 6 for error estimation) modified by Hairer and Wanner to use a 5th order error estimator with 3rd order correction. This modification was introduced because the original method failed in some cases (wrong steps can be accepted when step size is too large, for example in the Brusselator problem) and also had severe difficulties when applied to problems with discontinuities. This modification is explained in the second edition of the first volume (Nonstiff Problems) of the reference book by Hairer, Norsett and Wanner: Solving Ordinary Differential Equations (Springer-Verlag, ISBN 3-540-56670-8).

It is described in the Apache documentation.

Dormand Prince 54

Another solver from the Dormand Prince family, originally described in:

A family of embedded Runge-Kutta formulae.

J. R. Dormand and P. J. Prince.

Journal of Computational and Applied Mathematics

volume 6, no 1, 1980, pp. 19-26

This integrator is an embedded Runge-Kutta integrator of order 5(4) used in local extrapolation mode (i.e. the solution is computed using the high order formula) with step size control. This method uses 7 functions evaluations per step but since the last evaluation of a time step is the same as the first evaluation in the next time step, one evaluation can be avoided.

More details in the Apache Documentation.

Gregg Bulirsch Stoer

This solver implements a Gragg-Bulirsch-Stoer integrator for Ordinary Differential Equations. The Gragg-Bulirsch-Stoer algorithm is one of the most efficient ones currently available for smooth problems. It uses Richardson extrapolation to estimate what would be the solution if the step size could be decreased down to zero.

More details in the Apache Documentation.

Highman Hall 54

The solver implements the 5(4) Higham and Hall integrator for Ordinary Differential Equations.

This integrator is an embedded Runge-Kutta integrator of order 5(4) used in local extrapolation mode (i.e. the solution is computed using the high order formula) with stepsize control (and automatic step initialization) and continuous output. This method uses 7 functions evaluations per step.

More details in the Apache Documentation

Stochastic Solver

New in STEM 2.0, you can run STEM models stochastically. Some infectious processes are by nature stochastic. In a stochastic model, all transitions (e.g. between infectious compartments) are drawn from a discreet binomial distribution (using the Apache Commons math library). As a consequence, only an integer count of humans (or animals) are transitioned from one state to another. This is also what takes place in the real world.

The stochastic solver in STEM takes one input parameter, the stochastic seed. Varying the seed from one run to another will results in a different outcome and running multiple simulations varying the seed can give you an indication of the range of results possible. If the stochastic seed is the same between two runs the output of the model is guaranteed to be identical.