Difference between revisions of "SMILA/Documentation/Architecture Overview"

Revision as of 11:13, 10 January 2012

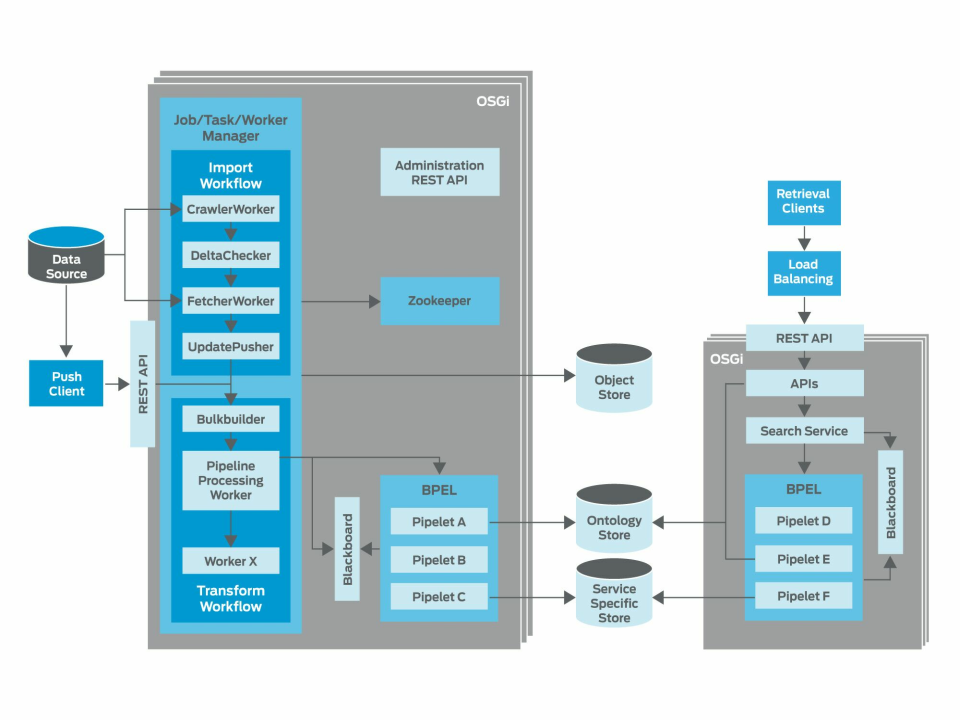

This page gives a short overview of SMILA's current architecture.

Introduction

SMILA is a framework that runs on top of an OSGi runtime and therefore follows the OSGi component model.

Architecture Overview

Description

The architecture overview depicts two general processes: preprocessing and information retrieval.

Note: When SMILA is used to build a search application, these are referred to as the indexing process and the search process.

-

The preprocessing process generally includes the interaction with the data source: crawlers pull the data and push it into the system via the BulkBuilder module. The information that can be pushed into the framework generally consists of a document's metadata, its content, and various security-relevant information such as access rights.

The bulkbuilder is the entry point to the asynchronous job management and persists the data in dedicated stores for further processing. A bulk is a set of records that is processed by various workers, which are orchestrated by an asynchronous workflow. Such a workflow is instantiated by defining a job, and the execution of such a job is called a job run.

For better crawl performance, a crawler (e.g. a file system crawler or a web crawler) is now implemented as a set of different workers that also run in the asynchronous job management. This makes it possible to run the individual steps of a crawler in parallel, even on multiple hosts. The complete preprocessing therefore consists of two jobs: one for extracting the raw data from the data source into SMILA, and one for transforming it and loading it into some target, e.g. an index.

An indexing client can also use the REST API to push JSON objects (i.e. a document's metadata) and the document contents into the bulkbuilder.
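As a rough illustration of such a push, the following sketch builds (but does not send) an HTTP POST request that carries one JSON record. The host, port, URL path, job name, and record attribute names are all assumptions made for this example and are not taken from this page; the component documentation is the place to check the actual REST resources.

```python
import json
import urllib.request

# Assumptions for illustration only: base URL, URL path layout, job name
# and attribute names are placeholders, not confirmed by this page.
BASE_URL = "http://localhost:8080/smila"


def build_push_request(job_name, record):
    """Build (but do not send) a POST request carrying one JSON record."""
    url = f"{BASE_URL}/job/{job_name}/record"
    body = json.dumps(record).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


# A record here is just metadata plus content as a JSON object.
record = {"_recordid": "doc-1", "Title": "Example", "Content": "Hello SMILA"}
request = build_push_request("indexUpdate", record)

# To actually send it against a running instance:
# urllib.request.urlopen(request)
```

Building the request separately from sending it keeps the example runnable without a server and makes the payload easy to inspect.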

Metadata, access rights and document contents are stored in the object store. Besides this storage, SMILA also offers a DeltaChecker worker that keeps information about the state of objects/documents while a data source is crawled, so that in follow-up crawl runs only changed objects are pushed into the transformation workflow. The ontology store is a dedicated store for persisting and managing ontologies. The Blackboard service provides a high-level API through which BPEL pipelines access record information.
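The idea behind delta checking can be sketched as follows. This is a minimal illustration, not the DeltaChecker worker itself: the class and function names are hypothetical, and comparing content hashes is an assumption chosen for the example (a real implementation might compare other state, such as modification dates).

```python
import hashlib


class DeltaState:
    """Remembers a fingerprint per object between crawl runs (hypothetical)."""

    def __init__(self):
        self._hashes = {}

    def is_changed(self, object_id, content):
        """Return True if the object is new or differs from the last run."""
        digest = hashlib.sha256(content).hexdigest()
        if self._hashes.get(object_id) == digest:
            return False
        self._hashes[object_id] = digest
        return True


def crawl_run(state, objects):
    """Return the ids of objects that should go on to the transformation."""
    return [oid for oid, content in objects.items()
            if state.is_changed(oid, content)]


state = DeltaState()
first_run = crawl_run(state, {"a.txt": b"one", "b.txt": b"two"})
second_run = crawl_run(state, {"a.txt": b"one (edited)", "b.txt": b"two"})
```

In the first run every object is new and is passed on; in the second run only `a.txt`, whose content changed, is passed on.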

Once a bulk is complete, i.e. once it matches the configured time or size constraints, it is released, and the JobManager determines the follow-up tasks for the next worker(s) as defined by the workflow of the active job.
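The release rule described above (a bulk is closed when it is either large enough or old enough) can be sketched like this. The class name, parameter names, and callback are hypothetical and only illustrate the principle; they do not reflect the bulkbuilder's actual configuration options.

```python
import time


class BulkCollector:
    """Collects records and releases them as a bulk by size or age (sketch)."""

    def __init__(self, max_records, max_age_seconds, on_release,
                 clock=time.monotonic):
        self._max_records = max_records
        self._max_age = max_age_seconds
        self._on_release = on_release  # called with the finished bulk
        self._clock = clock            # injectable so the rule is testable
        self._records = []
        self._started = None

    def add(self, record):
        if not self._records:
            # The age of a bulk is measured from its first record.
            self._started = self._clock()
        self._records.append(record)
        self._release_if_due()

    def tick(self):
        """Called periodically so that small bulks are released by age."""
        self._release_if_due()

    def _release_if_due(self):
        if not self._records:
            return
        too_big = len(self._records) >= self._max_records
        too_old = self._clock() - self._started >= self._max_age
        if too_big or too_old:
            bulk, self._records = self._records, []
            self._on_release(bulk)


released = []
now = [0.0]  # fake clock value, advanced manually below
collector = BulkCollector(2, 10.0, released.append, clock=lambda: now[0])
collector.add("r1")
collector.add("r2")   # size limit reached: first bulk is released
collector.add("r3")
now[0] = 11.0
collector.tick()      # age limit reached: second bulk is released
```

In this sketch the release callback stands in for handing the bulk over to the job management, which would then create tasks for the next workers in the workflow.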

The WorkerManager listens for available tasks from the TaskManager and lets its workers process them. These workers include PipelineProcessorWorkers, which execute synchronous BPEL workflows: they initialize a Blackboard with the records to be processed and start a BPEL engine that executes the desired workflow. The workflow in turn defines the order in which services are executed, where the services are either provided by the framework itself or implemented by the application developer.

Since the job processing synchronizes itself via ZooKeeper across the whole cluster, tasks can be executed on different nodes, so the preprocessing can easily be distributed and thereby parallelized across the cluster (provided that the storages are accessible from every node). The asynchronous job processing components are thus the central framework components that enable horizontal scaling of the preprocessing process. Workers can also be configured to process multiple tasks in parallel on a single node.
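The single-node case, where one worker processes several tasks in parallel, can be sketched with a thread pool. This illustrates the principle only: the task structure and function names are hypothetical, and the cluster-wide coordination via ZooKeeper is deliberately left out.

```python
from concurrent.futures import ThreadPoolExecutor


def process_task(task):
    """Stand-in for a worker processing one bulk of records."""
    return {"bulk": task["bulk"], "processed": len(task["records"])}


def run_worker(tasks, max_parallel_tasks):
    """Process tasks with at most max_parallel_tasks running concurrently."""
    with ThreadPoolExecutor(max_workers=max_parallel_tasks) as pool:
        # pool.map returns results in the order of the input tasks.
        return list(pool.map(process_task, tasks))


tasks = [
    {"bulk": "bulk-1", "records": ["r1", "r2"]},
    {"bulk": "bulk-2", "records": ["r3"]},
    {"bulk": "bulk-3", "records": ["r4", "r5", "r6"]},
]
results = run_worker(tasks, max_parallel_tasks=2)
```

Because each task covers its own bulk, the tasks are independent of each other, which is what makes this kind of parallel execution straightforward.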

-

The information retrieval process provides swift access to previously preprocessed and stored information. Since this process is synchronous, an external component has to distribute the load in order to enable horizontal scaling of information retrieval. Here, too, the flexible definition and execution of the application's business logic is provided by calling a BPEL engine with the desired workflow.

Hint: For the initial architecture proposal, please see the archived version.

Original slides can be found here: SMILA Architecture.zip

Component Documentation

For further, up-to-date documentation of all implemented components, please see:

| Component Documentation |